2022-09-19 18:00:00 Author: research.nccgroup.com

Modern organizations took the next evolutionary step when they became digital. Organizations are using cloud and automation to build dynamic infrastructure that supports more frequent product releases and faster innovation. This puts pressure on the IT department to do more and deliver faster. Automated cloud infrastructure also requires a new mindset and a new approach to change and risk. Used well, though, the technology can reduce risk and improve the quality of the infrastructure.

When a company plans to migrate its infrastructure and applications to the cloud or wants to create a new service, the IT department, Cloud or DevOps team is tasked with creating the necessary automated infrastructure deployment with security in mind. As security becomes more and more important, quality should be built in rather than tested in afterwards. This differs from how things were done previously. There are a lot of moving pieces, and many different teams may have to work together. It is difficult to know every part of the environment and to design all the security controls for every step of the deployment, or of the automated deployment process.

The good news is that a lot of information and tools are available today for anyone who would like to automatically deploy infrastructure resources with built-in security in the cloud by developing secure infrastructure as code. This article collects the main starting points into a guide on how to integrate security into infrastructure as code, and shows how security checks and gates, tools and procedures secure the infrastructure, mentioning free and/or open-source tools wherever possible.

What is Infrastructure as Code (IaC)?

Kief Morris’s book, Infrastructure as Code: Dynamic Systems for the Cloud Age, gives a nice definition: infrastructure as code “is an approach to infrastructure automation based on practices from software development. It emphasizes consistent, repeatable routines for provisioning and changing systems and their configuration. You make changes to code, then use automation to test and apply those changes to your systems.” [49]

It comes with benefits such as cost reduction, increased deployment speed, scalability, consistent and reliable configurations, and visible governance, security and compliance controls. One paradigm that comes with it is immutable infrastructure, which means that no changes are made to a server after it has been deployed. If a new version of a web server becomes available, a new deployment with the new configuration is rolled out instead of updating the existing server. This ensures that the same resources and settings are deployed every time. The security of the infrastructure is increased by shifting security left (into the early development phase) as much as possible and baking it in.

Resources for getting started

Keeping security in mind could not be easier today. A tremendous amount of information is available on the Internet about how to build something and make it secure: freely available documentation, articles, blog posts, conferences, meetups, mailing lists, newsletters [52], Discord servers, online tutorials and educational videos, books, trainings with certifications, and benchmarks, frameworks and blueprints with best practices from cloud providers and security engineers.

A good start is the well-architected frameworks released by each of the main cloud providers (Amazon Web Services (AWS), Azure and Google Cloud Platform (GCP)), along with their blueprints for a stack or a service to achieve resilience and security. [1] [2] [3] [4] [5] Frameworks describe the key concepts, design patterns and best practices, while blueprints are complete, deployable solutions.

Cloud providers also frequently release blog posts on securing services, basic implementations and how their services work. [6] [7] [8]

Some great examples of this include:

- How to integrate Policy Intelligence recommendations into an IaC pipeline [9]

- Protecting your GCP infrastructure with Forseti Config Validator part four: Using Terraform Validator [10]

- How to use CI/CD to deploy and configure AWS security services with Terraform [11]

- How to create an Azure key vault and vault access policy by using a Resource Manager template [12] [13] [14]

Threat modeling

As a first step after creating the system's architecture diagram, but before starting to develop IaC, create a threat model. Use any of the well-known threat modeling frameworks such as STRIDE (Spoofing, Tampering, Repudiation, Information Disclosure, Denial of Service and Elevation of Privilege) [15] to understand the threats, the possible attack vectors and the security controls that need to be in place to prevent them. Shifting security design and testing as far left as possible in the lifecycle of infrastructure as code saves money on fixing security issues. Building security in during the early stages is better than adding it later, because any later modification costs more, for example rearchitecting the environment or breaking parts of the system.
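To make the STRIDE step concrete, the sketch below enumerates which threat categories to review for each element of a data-flow diagram. It assumes the commonly cited "STRIDE per element" mapping (external entities: S, R; processes: all six; data flows: T, I, D; data stores: T, R, I, D); the diagram elements themselves are hypothetical.

```python
# STRIDE-per-element sketch. The element-to-category mapping follows the
# commonly cited STRIDE-per-element table (an assumption, adapt as needed);
# the diagram elements used in the example are hypothetical.
from dataclasses import dataclass

STRIDE = {
    "S": "Spoofing",
    "T": "Tampering",
    "R": "Repudiation",
    "I": "Information disclosure",
    "D": "Denial of service",
    "E": "Elevation of privilege",
}

# Which STRIDE categories typically apply to each diagram element type.
# For data stores, Repudiation mainly matters when the store holds audit logs.
APPLICABLE = {
    "external_entity": "SR",
    "process": "STRIDE",
    "data_flow": "TID",
    "data_store": "TRID",
}


@dataclass(frozen=True)
class Element:
    name: str
    kind: str  # one of the keys of APPLICABLE


def enumerate_threats(elements):
    """Return (element name, threat category) pairs to review in the threat model."""
    return [(e.name, STRIDE[letter]) for e in elements for letter in APPLICABLE[e.kind]]


if __name__ == "__main__":
    diagram = [
        Element("Admin user", "external_entity"),
        Element("Web API", "process"),
        Element("API -> database", "data_flow"),
        Element("Customer database", "data_store"),
    ]
    for name, threat in enumerate_threats(diagram):
        print(f"{name}: {threat}")
```

Each resulting pair is a question for the review ("how could the Web API be spoofed?"), and the answers point to the controls that the IaC must then implement.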

Microsoft has a free, publicly available tutorial on basic threat modeling [47], while Draw.io [16] and the Microsoft Threat Modeling Tool [17] come in handy for drawing the threat model and attack trees [18] and putting everything into practice [54] [55] [57]. A dedicated tool called Deciduous [19] creates more comprehensive, interactive attack trees and can be used together with Sycamore [20] to save, edit and share them.
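An attack tree is a simple data structure: the root is the attacker's goal, OR nodes are satisfied by any child and AND nodes need all children. The sketch below finds the cheapest path to the goal, assuming made-up relative costs on the leaves; it illustrates the concept and is not how Deciduous or Sycamore work internally.

```python
# Attack tree sketch: leaves are attacker actions with a hypothetical cost,
# OR nodes need any one child, AND nodes need every child.
from dataclasses import dataclass, field


@dataclass
class Node:
    label: str
    kind: str = "leaf"  # "leaf", "and" or "or"
    cost: float = 0.0   # only meaningful for leaves
    children: list = field(default_factory=list)


def cheapest_attack(node):
    """Return (total cost, leaf labels) of the cheapest way to achieve node's goal."""
    if node.kind == "leaf":
        return node.cost, [node.label]
    results = [cheapest_attack(child) for child in node.children]
    if node.kind == "or":
        return min(results, key=lambda result: result[0])
    if node.kind == "and":
        total = sum(cost for cost, _ in results)
        steps = [step for _, path in results for step in path]
        return total, steps
    raise ValueError(f"unknown node kind: {node.kind}")


if __name__ == "__main__":
    # Hypothetical goal: read data from a storage bucket.
    tree = Node("Read bucket data", "or", children=[
        Node("Bucket is public", cost=1),
        Node("Use stolen credentials", "and", children=[
            Node("Phish a developer", cost=5),
            Node("Credentials lack MFA", cost=2),
        ]),
    ])
    print(cheapest_attack(tree))
```

The cheapest branch is usually where a security control in the IaC (for example blocking public bucket access) buys the most.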

The Center for Internet Security (CIS) Benchmarks [24] and knowledge bases such as those from Cloud Conformity [25], Bridgecrew [26] or Datadog [53] can help lay the security foundation with security controls that can be mapped to different threats. Using these recommendations together with the threat modeling framework is the initial starting point. This can be extended with cloud-specific lists of attacks seen in real cases, such as the MITRE ATT&CK framework [21] [22] and its Azure mapping [23].

One interesting resource worth highlighting is a 167-page threat model for AWS S3, released together with a checklist [27], which is a good example to follow.

Videos, presentation slides, blog posts and whitepapers from security and hacking conferences are available on the Internet to add more scenarios to the list of attacks and to gain a deeper understanding. Beau Bullock offers a hands-on video training [28] showing attack concepts and tools against multiple cloud providers, and there are also cloud-specific resources such as Rhino Security Labs' AWS privilege escalation attack paths [50], NetSPI's Azure articles [51] and the GCP privilege escalation techniques [29] [30] by Dylan Ayrey, Allison Donovan and Kat Traxler.

Choosing an Infrastructure as Code Language

Several questions need to be decided when developing infrastructure as code, including:

- Whether to use a declarative language (define the desired state of the infrastructure), an imperative one (define how to create the infrastructure) or a general-purpose language (like Python)

- Cloud agnosticism

- Tool support and the amount of scripting required

Multiple options are available for developing IaC. If you already know a programming language, AWS CDK [66] or Pulumi [65] could be a good choice. If not, the language of a provisioning tool such as Terraform, CloudFormation or ARM, or command-line tools such as the Az PowerShell module, gcloud or the aws CLI, may be the winner. The good news is that the common IaC tools are supported by linters [46] and static analysers [45] that can be integrated with an Integrated Development Environment (IDE) and with Continuous Integration & Continuous Deployment (CI/CD) pipelines to continuously check for security misconfigurations such as over-permissive rules or missing encryption.
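The declarative option in the list above means you describe the end state and the tool works out the steps. The sketch below shows that idea in miniature: a plan is computed by diffing desired and current state, and applying the same desired state twice produces no further actions (idempotence). Resource names and attributes are hypothetical; real tools such as Terraform do this against provider APIs and a state file.

```python
# Declarative reconciliation sketch. Resource names and attributes are
# hypothetical; state lives in plain dicts instead of a real cloud.


def plan(desired, current):
    """Return the (action, name) list needed to turn current into desired."""
    actions = []
    for name, attrs in desired.items():
        if name not in current:
            actions.append(("create", name))
        elif current[name] != attrs:
            actions.append(("update", name))
    for name in current:
        if name not in desired:
            actions.append(("delete", name))
    return actions


def apply(desired, current):
    """Apply the plan in memory and return the new current state."""
    new_state = dict(current)
    for action, name in plan(desired, current):
        if action == "delete":
            del new_state[name]
        else:  # create or update both end with the desired attributes
            new_state[name] = dict(desired[name])
    return new_state


if __name__ == "__main__":
    desired = {"vm-web": {"size": "small", "disk_encrypted": True}}
    current = {"vm-old": {"size": "large", "disk_encrypted": False}}
    print(plan(desired, current))
    state = apply(desired, current)
    print(plan(desired, state))  # empty: nothing left to do
```

An imperative script would instead hard-code "create vm-web, then delete vm-old", which is only correct for one particular starting state.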

Terraform recommends creating and using modules, as they break the code down into smaller units that focus on a specific area, are easier to handle and can be reused. There is also a registry of ready-made Terraform modules written by the cloud providers.

Adding Identity and Access Management (IAM)

When a cloud infrastructure is made up of multiple services that interact with each other, or a user needs to perform certain administrative tasks by assuming a role, IAM policies are required. They should be created following the principle of least privilege, using resource, condition and access level constraints, because it is very easy to grant more permissions than necessary. With great power comes great responsibility: least privilege is very important because it limits the blast radius in the case of compromised credentials or a successful attack.
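As an illustration of the three kinds of constraints, the sketch below builds an AWS IAM policy document that grants read access to a single prefix of a single bucket and only over TLS. The bucket and prefix names are hypothetical; the policy grammar (`Version`, `Statement`, `Effect`, `Action`, `Resource`, `Condition`) and the `aws:SecureTransport` and `s3:prefix` condition keys are standard IAM elements.

```python
# Least-privilege AWS IAM policy sketch. The bucket and prefix used in the
# example are hypothetical; adjust actions to what the workload really needs.
import json


def read_only_prefix_policy(bucket: str, prefix: str) -> dict:
    """Allow reading objects under one prefix of one bucket, over TLS only."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ReadObjectsUnderPrefix",
                "Effect": "Allow",
                # Access level constraint: a read action instead of "s3:*".
                "Action": ["s3:GetObject"],
                # Resource constraint: objects under one prefix, not "*".
                "Resource": [f"arn:aws:s3:::{bucket}/{prefix}/*"],
                # Condition constraint: only requests made over TLS.
                "Condition": {"Bool": {"aws:SecureTransport": "true"}},
            },
            {
                "Sid": "ListOnlyThatPrefix",
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": [f"arn:aws:s3:::{bucket}"],
                # Condition constraint: listing is limited to the same prefix.
                "Condition": {"StringLike": {"s3:prefix": [f"{prefix}/*"]}},
            },
        ],
    }


if __name__ == "__main__":
    print(json.dumps(read_only_prefix_policy("example-reports", "monthly"), indent=2))
```

Generating the document from a function keeps the constraints consistent across environments; in Terraform the same JSON would typically be passed to the policy resource.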

Fortunately, tools such as IAM Zero [31] and Policy Sentry [32] can help with this task by adding only the permissions that are absolutely required, thereby achieving the principle of least privilege. Cloudsplaining [33] can scan existing AWS IAM policies for least privilege violations. In addition, PMapper [34] (developed here at NCC Group!) models AWS IAM policies and roles and visualises privilege escalation paths by running queries. AWSPX [35] also helps visualise effective access between resources. Pacu [36] automatically looks for and reports well-known roles that can be used in privilege escalation attacks. For Google Cloud, GCP Scanner [76] shows what level of access a set of credentials has.
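In the spirit of what Cloudsplaining does (though far simpler, and not its actual rule set), the sketch below flags `Allow` statements with wildcard actions or resources in a policy document. The two rules are assumptions chosen for illustration; `NotAction` and `NotResource` are deliberately not handled.

```python
# Simplified least-privilege scan of an IAM policy document. The rules are
# illustrative assumptions; NotAction/NotResource are not handled here.


def as_list(value):
    """IAM allows a single string or a list in most fields; normalise to a list."""
    return value if isinstance(value, list) else [value]


def find_broad_statements(policy):
    """Return (statement index, finding) pairs for overly broad Allow statements."""
    findings = []
    for index, stmt in enumerate(as_list(policy.get("Statement", []))):
        if stmt.get("Effect") != "Allow":
            continue  # Deny statements only reduce access
        for action in as_list(stmt.get("Action", [])):
            if action == "*" or action.endswith(":*"):
                findings.append((index, f"wildcard action {action}"))
        if "*" in as_list(stmt.get("Resource", [])):
            findings.append((index, "wildcard resource *"))
    return findings


if __name__ == "__main__":
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": "s3:*", "Resource": "*"},
            {"Effect": "Deny", "Action": "*", "Resource": "*"},
        ],
    }
    for index, finding in find_broad_statements(policy):
        print(f"statement {index}: {finding}")
```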

In an existing GCP environment, Gcploit [37] and gcphound [73] are valuable for finding privilege escalation paths and automatically exploiting these weaknesses, which helps to understand and validate weaknesses in your system's design. For Azure, BloodHound supports Azure Active Directory starting from version 4.0. Cloud providers also have their own built-in IAM analysis and recommendation tools that can show effective permissions and whether an action is allowed or a permission is missing. For example, GCP's IAM recommender [38] shows, over time, the privileges a role has but has not used for a while. AWS provides AWS IAM Access Analyzer.
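Tools like PMapper essentially model "principal A can become principal B" relationships (for example via role assumption or passing a role to a service) as a directed graph and search it. A minimal sketch of that search, assuming a hypothetical, hand-written edge list rather than one derived from real policies:

```python
# Privilege escalation path search over a graph of "can become" edges.
# The principals and edges are hypothetical; tools such as PMapper derive
# them from real IAM policies and trust relationships.
from collections import deque


def escalation_path(edges, start, targets):
    """Breadth-first search: shortest path from start to any target, or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node in targets:
            return path
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


if __name__ == "__main__":
    edges = {
        "ci-runner": ["deploy-role"],
        "deploy-role": ["lambda-exec-role"],
        "lambda-exec-role": ["admin-role"],
        "reader": [],
    }
    print(escalation_path(edges, "ci-runner", {"admin-role"}))
    print(escalation_path(edges, "reader", {"admin-role"}))
```

Any non-empty path to an administrative principal is a finding: removing one edge on it (for example a too-broad trust policy) breaks the escalation.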

CI/CD Pipeline Integration

To avoid manually repeating all the steps with our code every time there is a modification, Continuous Integration & Continuous Deployment (CI/CD) pipeline integration comes in handy and solves this problem by automating the steps. DevOps best practices such as using a Version Control System (VCS), peer review and SAST tools can be integrated into the pipeline, enabling faster, automated deployment with security baked in. Pushing code into a VCS provides backup and rollback options. Requiring peer review means the code is checked by someone else before it is merged into the existing code, and this review can be supported by automated policy as code checks. Running a Static Application Security Testing (SAST) tool automatically checks for and reports security issues in the code. SAST tools such as Checkov [39], Regula [40], Semgrep [41], tfscan [42], kics [48], tfsec [43], the tfsec extension for Visual Studio Code [45] and other linters can scan the code while it is being developed, before it is committed or merged, and before and after it is deployed. In short, from the moment the code is typed until it is deployed and running, a range of security issues can be automatically checked and prevented.
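A pipeline gate ultimately boils down to "scan, report, and exit non-zero on findings". The sketch below applies two example rules to the JSON form of a Terraform plan (`terraform show -json`), using its `resource_changes` list with `address`, `type` and `change.after`. The rules (SSH open to the world, unencrypted `aws_ebs_volume`) are illustrative assumptions; in practice one of the SAST tools above would do this far more thoroughly.

```python
# Minimal CI gate sketch over the JSON plan produced by
#   terraform plan -out=tfplan && terraform show -json tfplan > plan.json
# The two rules below are illustrative assumptions, not a complete rule set.
import json
import sys


def check_plan(plan: dict) -> list:
    findings = []
    for rc in plan.get("resource_changes", []):
        after = (rc.get("change") or {}).get("after")
        if after is None:  # the resource is being deleted; nothing to check
            continue
        address = rc.get("address", "<unknown>")
        if rc.get("type") == "aws_security_group":
            for rule in after.get("ingress") or []:
                world = "0.0.0.0/0" in (rule.get("cidr_blocks") or [])
                all_traffic = rule.get("protocol") == "-1"
                ssh = rule.get("from_port", 0) <= 22 <= rule.get("to_port", 0)
                if world and (all_traffic or ssh):
                    findings.append(f"{address}: SSH reachable from 0.0.0.0/0")
        elif rc.get("type") == "aws_ebs_volume" and not after.get("encrypted"):
            findings.append(f"{address}: EBS volume is not encrypted")
    return findings


def main(path: str) -> int:
    with open(path) as f:
        findings = check_plan(json.load(f))
    for finding in findings:
        print(finding)
    # A non-zero exit status makes most CI systems fail the job.
    return 1 if findings else 0


# Usage in a pipeline step: python check_plan.py plan.json
if __name__ == "__main__" and len(sys.argv) > 1:
    sys.exit(main(sys.argv[1]))
```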

Policy as Code

There should be an automated way to ensure that the next time someone updates the infrastructure code or creates new code, no bad examples or misconfigurations are introduced and best practices are followed instead. This also removes some of the burden of peer reviewing code. This automated approach is known as Policy as Code: representing and managing policies as code to automatically enforce best practices and company-wide controls. Azure has built-in policy as code and governance services with Azure Policy [64], Initiatives and Blueprints. There are two specific tools for AWS CloudFormation: cfn_nag [60] and AWS CloudFormation Guard [61]. GCP offers Organization Policy [62], similar to AWS Service Control Policies [63], but these live in the cloud provider's space and cannot be integrated into the CI/CD pipeline.

Open-source tools such as Open Policy Agent (OPA) [44] or Regula [40] can be integrated into the CI/CD pipeline and can also be run periodically to look for drift.

Additional best practices such as using modules, naming conventions and enforced tags can further improve visibility, traceability and cost optimization.
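Policy as code rules are usually small predicates over resource definitions. OPA expresses them in its own Rego language; the sketch below shows the same idea in Python for required tags and a naming convention. The tag set and the `<env>-<purpose>` naming pattern are hypothetical company policies.

```python
# Policy-as-code sketch in Python (OPA itself would express this in Rego).
# The required tags and the naming pattern are hypothetical company policies.
import re

REQUIRED_TAGS = {"owner", "environment", "cost-center"}
NAME_PATTERN = re.compile(r"^(dev|test|prod)-[a-z0-9-]+$")


def violations(resource: dict) -> list:
    """Return the policy violations for one resource with a name and tags."""
    problems = []
    missing = REQUIRED_TAGS - set(resource.get("tags", {}))
    if missing:
        problems.append(f"missing tags: {', '.join(sorted(missing))}")
    if not NAME_PATTERN.match(resource.get("name", "")):
        problems.append("name does not follow <env>-<purpose> convention")
    return problems


if __name__ == "__main__":
    resources = [
        {"name": "prod-web-01", "tags": {"owner": "team-a", "environment": "prod", "cost-center": "42"}},
        {"name": "WebServer", "tags": {"owner": "team-b"}},
    ]
    for r in resources:
        print(r["name"], violations(r))
```

Run in the pipeline, a non-empty result blocks the merge; run on a schedule against the live inventory, the same rules detect drift from the policy.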

Configuration Management

Although configuration management is not strictly in scope, it is very closely connected and is the next step. Note that IaC does not cover configuring the software or applications that run on top of the infrastructure; it only provides the underlying infrastructure. Everything that comes after the base infrastructure deployment is handed over to configuration management tools such as Ansible [77], Chef [78] and Puppet [79], which help automate the configuration settings from the benchmarks and best practices mentioned above. There are also tools for reviewing configuration management settings: InSpec [80], Serverspec [81] and Terratest [82].
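Tools such as InSpec and Serverspec express configuration expectations as tests. A rough Python equivalent for an SSH server is sketched below; the expected values are assumptions based on common hardening guidance rather than a quotation of any benchmark, and settings inside `Match` blocks are ignored for simplicity.

```python
# InSpec/Serverspec-style check sketch in Python: parse sshd_config text and
# compare a few settings with expected hardening values. The expected values
# are assumptions based on common hardening guidance, not a CIS quotation.
import re

EXPECTED = {
    "permitrootlogin": "no",
    "passwordauthentication": "no",
    "x11forwarding": "no",
}


def parse_sshd_config(text: str) -> dict:
    settings = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()
        if not line:
            continue
        parts = re.split(r"[\s=]+", line, maxsplit=1)
        key = parts[0].lower()
        if key == "match":
            # Settings after a Match line are conditional; this sketch ignores them.
            break
        value = parts[1].strip() if len(parts) > 1 else ""
        # sshd uses the first value it obtains for a keyword, so keep the first.
        settings.setdefault(key, value)
    return settings


def failed_checks(text: str) -> list:
    """Return keywords whose value is missing or differs from EXPECTED."""
    settings = parse_sshd_config(text)
    return sorted(
        key for key, expected in EXPECTED.items()
        if settings.get(key, "").lower() != expected
    )


if __name__ == "__main__":
    sample = "PermitRootLogin no\nPasswordAuthentication yes\n"
    print(failed_checks(sample))
```

A missing keyword is reported as a failure on purpose, because the built-in default may differ between OpenSSH versions.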

Visualizing Infrastructure

Even with the infrastructure up and running, we are not finished yet. Visualizing the running cloud environment helps with the inventory, can be compared with the architecture diagram to find differences, and can be used to further improve the threat model by revealing gaps or missing threats in the existing environment.

For Azure Resource Manager (ARM), the Resource Visualiser [71] and ARMViz [72] tools are available, and the former allows exporting the infrastructure. Google has Network Topology [70] and the Google Architecture Diagram Tool [69]. AWS offers Neptune [67] for the running infrastructure and Perspective [68], which is more of an architecture diagram tool. Independent tools such as cdk-dia [83], cfn-diagram [84] and cloudmapper [85] can create a diagram from the resources in the cloud environment, but these are static, point-in-time diagrams. On the other hand, Fugue Developer [86] connects to the environment, periodically reads it, updates the diagram and warns about any misconfigurations.
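Comparing the running environment with the architecture diagram can be reduced to a set difference over connections. The sketch below assumes both sides are available as sets of (source, destination) pairs, which is a hypothetical simplification of what the visualization tools export, and also renders the observed graph as Graphviz DOT text.

```python
# Compare designed vs. observed connections and render the observed ones as
# Graphviz DOT. Both edge sets are hypothetical; in practice the observed set
# would come from an inventory or visualization tool.


def diff_edges(designed: set, observed: set) -> dict:
    """Connections present only in reality, or only on the diagram."""
    return {
        "unexpected": sorted(observed - designed),
        "missing": sorted(designed - observed),
    }


def to_dot(edges: set) -> str:
    lines = ["digraph cloud {"]
    for src, dst in sorted(edges):
        lines.append(f'  "{src}" -> "{dst}";')
    lines.append("}")
    return "\n".join(lines)


if __name__ == "__main__":
    designed = {("lb", "web"), ("web", "db")}
    observed = {("lb", "web"), ("web", "db"), ("bastion", "db")}
    print(diff_edges(designed, observed))
    print(to_dot(observed))
```

An "unexpected" connection, such as a bastion host talking directly to the database, is exactly the kind of gap that should feed back into the threat model.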

Monitoring and Drift Control

Life does not stop here: in case of an incident or problem, an emergency manual change can be introduced and worsen the security posture, especially if it is forgotten. Cloud monitoring, security posture management and drift control help in these situations at the post-deployment stage. Monitoring can happen at the cloud resource level or at the configuration level. Tools work based on tags, by scanning all the resources in the cloud environment, or by reading the state file of tools like Terraform. Rerunning the IaC tools can also show the differences between the deployed and the original state and reapply the original, but without automation this is less practical. At the cloud resource level driftctl [87] comes in handy, while configuration drift monitoring can be handled by InSpec [80], Serverspec [81] and Terratest [82]. Resources deployed via Azure Blueprints can be automatically remediated back to their original layout if they are modified. When the cloud environment reaches a certain point, Cloud Security Posture Management (CSPM) tools such as OpenCSPM [74] or magpie [75] could be the next step, as they take things to another level: they combine resource inventory, custom and industry policies, security checks, risk tracking and monitoring in one tool for a multi-cloud environment.
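At its core, resource-level drift detection compares what the state says with what the cloud reports. The sketch below assumes both are already available as dicts keyed by resource ID (hypothetical IDs and attributes) and reports changed attributes, missing resources, and unmanaged resources that exist in the cloud but not in the state, which mirrors the categories tools such as driftctl report.

```python
# Minimal drift check: compare attributes recorded in a state snapshot with
# attributes read from the live environment. Resource IDs and attributes are
# hypothetical; driftctl and similar tools do this against real provider APIs.


def detect_drift(state: dict, live: dict) -> dict:
    report = {"changed": {}, "missing": [], "unmanaged": []}
    for rid, expected in state.items():
        actual = live.get(rid)
        if actual is None:
            report["missing"].append(rid)  # deleted outside of IaC
            continue
        diffs = {
            key: (value, actual.get(key))
            for key, value in expected.items()
            if actual.get(key) != value
        }
        if diffs:
            report["changed"][rid] = diffs  # (expected, actual) per attribute
    # Created by hand, e.g. during an incident, and never codified.
    report["unmanaged"] = sorted(set(live) - set(state))
    return report


if __name__ == "__main__":
    state = {"sg-1": {"ssh_cidr": "10.0.0.0/8"}, "vol-1": {"encrypted": True}}
    live = {"sg-1": {"ssh_cidr": "0.0.0.0/0"}, "vm-9": {}}
    print(detect_drift(state, live))
```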

Evolving the Maturity of your IaC

You can systematically evolve the infrastructure and quantify its maturity with an Infrastructure as Code Maturity Model. Gary Stafford gave a talk about an infrastructure as code maturity model [56] with the following levels:

- Level -1 Regressive: Process is unrepeatable, poorly controlled, and reactive.

- Level 0 Repeatable: Process is documented and partly automated.

- Level 1 Consistent: Process is automated and applied across the whole lifecycle.

- Level 2 Quantitatively Managed: Process is measured and controlled.

- Level 3 Optimizing: Process is optimized.

With fast, continuous, automated infrastructure deployment, the change management process needs to take a different approach. Scheduling change requests and writing detailed recovery plans would lose the time and speed advantage that IaC offers. Rollback comes from the previously working, battle-tested version in the version control system. Changes should be small and affect a limited scope. The modification and the person who made it can be traced through the version control system and its commit messages, while automated security tests enforce the security baseline. Kief Morris describes two change management patterns in his book [49]: continuous synchronization and immutable servers. The first continuously applies the configuration and overwrites any differences, while the latter completely rebuilds the server for every change.
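The difference between the two patterns can be seen in a toy model where a server is a dict with an ID and a configuration, and provisioning is simulated by a counter. All names are hypothetical; the point is that continuous synchronization keeps the same server and edits it, while the immutable pattern produces a new server and retires the old one.

```python
# Toy contrast of the two change management patterns. Servers are plain dicts
# and provisioning is simulated; names and fields are hypothetical.
import itertools

_ids = itertools.count(1)


def build_server(config: dict) -> dict:
    """Simulate provisioning a brand-new server from a configuration."""
    return {"id": next(_ids), "config": dict(config)}


def continuous_sync(server: dict, desired: dict) -> dict:
    """Continuous synchronization: overwrite differing settings in place."""
    for key, value in desired.items():
        if server["config"].get(key) != value:
            server["config"][key] = value
    return server


def immutable_replace(server: dict, desired: dict):
    """Immutable server: build a replacement and return it with the ID to retire."""
    return build_server(desired), server["id"]


if __name__ == "__main__":
    web = build_server({"tls_min_version": "1.2"})
    web = continuous_sync(web, {"tls_min_version": "1.3"})
    print("synced in place:", web)
    new_web, retired = immutable_replace(web, {"tls_min_version": "1.3"})
    print("replacement:", new_web, "retire:", retired)
```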

Further traceability sources include change history, cloud audit logs, tags applied to resources, signed version control system commits, CI/CD pipeline job history and monitoring tools. Branch protection with required status checks and required signatures can also improve traceability and enforce policies.
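Signed commits are only useful if someone checks them. Git's `%G?` log placeholder prints one letter per commit describing its signature status (`G` for a good, valid signature, `N` for no signature, other letters for bad, expired, revoked or uncheckable signatures). The sketch below parses that output; treating anything other than `G` as a finding is an assumption you may want to relax.

```python
# Audits commit signatures using the output of
#   git log --format="%H %G?"
# Only "G" (good, valid signature) is accepted here, which is an assumption.
import subprocess


def unsigned_commits(log_output: str) -> list:
    """Return (commit hash, status letter) for commits without a good signature."""
    bad = []
    for line in log_output.splitlines():
        if not line.strip():
            continue
        commit, status = line.split()
        if status != "G":
            bad.append((commit, status))
    return bad


def audit(rev_range: str = "HEAD") -> list:
    """Run git log in the current repository and audit the given revision range."""
    out = subprocess.run(
        ["git", "log", "--format=%H %G?", rev_range],
        capture_output=True, text=True, check=True,
    ).stdout
    return unsigned_commits(out)
```

In a pipeline this would typically run over the commits of a merge request (for example `audit("origin/main..HEAD")`) and fail the job when the list is not empty.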

As everything is a codebase that is easy to read and interpret, together with version control commits and notes it also acts as documentation, extending and backing up the architecture documentation by giving context and a deeper understanding of choices and strategies.

It is very important to track resources and keep an up-to-date inventory, because you cannot defend an environment if you do not know what resources it contains. The inventory of resources is provided by the code, state files, cloud providers’ dashboards and monitoring systems; it can be visualized via diagrams and viewed by tags, naming conventions and project hierarchies.

Backup of the code is ensured by the multiple versions stored in the version control system. As the infrastructure is automatically deployed and idempotent and/or immutable, only the configuration settings and data require backup.

When time or knowledge is limited, or simply to gain assurance from an independent party, a security assessment by a third-party company can help by revealing any missed spots or confirming a clean sheet. This is an optional step, but it provides confirmation and an independent review of the whole picture.

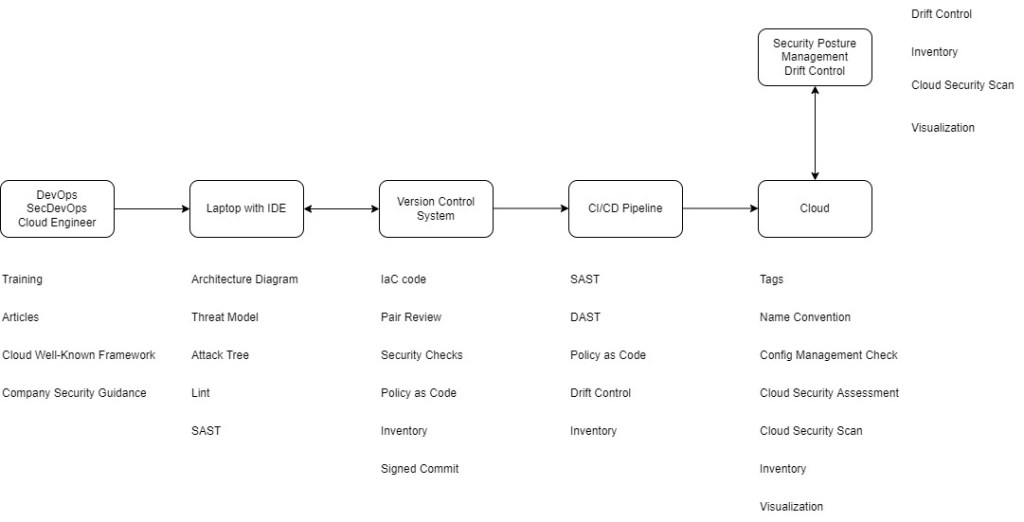

The Big Picture

As a picture is worth a thousand words, here is the big picture of the points discussed so far.

Figure 1: The lifecycle of Infrastructure as Code and security

Figure 2: Continuous security within the lifecycle

Summary

The deployed infrastructure has gone through multiple security checks and approvals, complies with company security best practices and governing policies, and can be traced back to who introduced what into the deployed code, and when. Having gone through all the parts of developing IaC to automatically deploy secure infrastructure in the cloud, it is clear how many places things can go wrong. If a team commits to using IaC and rigorously executes these steps in an automated way, it can gain substantial benefits in visibility and traceability, along with fast, repeatable and secure infrastructure deployment.

References

[2] https://cloud.google.com/security/best-practices

[3] https://docs.microsoft.com/en-us/azure/architecture/framework/

[4] https://docs.microsoft.com/en-us/azure/architecture/guide/design-principles/

[5] https://docs.microsoft.com/en-us/azure/architecture/guide/

[6] https://aws.amazon.com/blogs/

[7] https://azure.microsoft.com/en-us/blog/

[8] https://cloud.google.com/blog/

[12] https://docs.microsoft.com/en-us/azure/key-vault/general/vault-create-template?tabs=CLI

[13] https://azure.microsoft.com/en-us/blog/topics/security/

[14] https://aws.amazon.com/blogs/security/

[15] https://docs.microsoft.com/en-us/previous-versions/commerce-server/ee823878(v=cs.20)?redirectedfrom=MSDN

[16] https://www.diagrams.net/

[17] https://www.microsoft.com/en-us/securityengineering/sdl/threatmodeling

[18] https://github.com/michenriksen/drawio-threatmodeling

[19] https://swagitda.com/blog/posts/deciduous-attack-tree-app/

[20] https://github.com/raesene/sycamore

[21] https://attack.mitre.org/matrices/enterprise/cloud/

[22] https://attack.mitre.org/

[23] https://center-for-threat-informed-defense.github.io/security-stack-mappings/Azure/README.html

[24] https://www.cisecurity.org/benchmark/amazon_web_services/

[25] https://www.trendmicro.com/cloudoneconformity/

[26] https://docs.bridgecrew.io/docs/aws-policy-index

[27] https://trustoncloud.com/the-last-s3-security-document-that-well-ever-need/

[28] https://www.blackhillsinfosec.com/breaching-the-cloud-perimeter-w-beau-bullock/

[29] https://www.youtube.com/watch?v=Ml09R38jpok

[30] https://www.youtube.com/watch?v=dRVFoyQxRiU

[31] https://github.com/common-fate/iamzero

[32] https://github.com/salesforce/policy_sentry

[33] https://github.com/salesforce/cloudsplaining

[34] https://github.com/nccgroup/PMapper

[35] https://github.com/FSecureLABS/awspx

[36] https://github.com/RhinoSecurityLabs/pacu

[37] https://github.com/dxa4481/gcploit

[38] https://cloud.google.com/iam/docs/recommender-overview

[39] https://github.com/bridgecrewio/checkov

[40] https://github.com/fugue/regula

[41] https://github.com/returntocorp/semgrep

[42] https://github.com/wils0ns/tfscan

[43] https://github.com/aquasecurity/tfsec

[44] https://github.com/open-policy-agent/opa

[45] https://marketplace.visualstudio.com/items?itemName=tfsec.tfsec

[46] https://github.com/terraform-linters/tflint

[47] https://docs.microsoft.com/en-us/learn/paths/tm-threat-modeling-fundamentals/

[48] https://github.com/Checkmarx/kics

[49] Kief Morris, Infrastructure as Code: Dynamic Systems for the Cloud Age, O’Reilly, https://www.oreilly.com/library/view/infrastructure-as-code/9781098114664/

[50] https://rhinosecuritylabs.com/aws/aws-privilege-escalation-methods-mitigation/

[51] https://www.netspi.com/blog/technical/cloud-penetration-testing/

[52] https://cloudseclist.com/

[53] https://docs.datadoghq.com/security_platform/default_rules/#cat-posture-management-cloud

[54] https://www.youtube.com/watch?v=5jyL-CHib54

[55] https://www.youtube.com/watch?v=DEVt1Adybvs

[56] https://www.slideshare.net/garystafford/infrastructure-as-code-maturity-model

[57] https://www.youtube.com/watch?v=VbW-X0j35gw

[60] https://stelligent.com/2018/03/23/validating-aws-cloudformation-templates-with-cfn_nag-and-mu/

[61] https://github.com/aws-cloudformation/cloudformation-guard

[62] https://cloud.google.com/resource-manager/docs/organization-policy/overview

[63] https://docs.aws.amazon.com/organizations/latest/userguide/orgs_manage_policies_scps.html

[64] https://docs.microsoft.com/en-us/azure/governance/policy/overview

[66] https://aws.amazon.com/cdk/

[68] https://aws.amazon.com/solutions/implementations/aws-perspective/

[70] https://cloud.google.com/network-intelligence-center/docs/network-topology/concepts/overview

[74] https://github.com/OpenCSPM/opencspm

[75] https://www.openraven.com/research-tools/magpie

[76] https://github.com/google/gcp_scanner

[77] https://www.ansible.com/

[78] https://www.chef.io/

[79] https://puppet.com/

[80] https://docs.chef.io/inspec/

[82] https://terratest.gruntwork.io/

[83] https://github.com/pistazie/cdk-dia

[84] https://github.com/mhlabs/cfn-diagram

[85] https://github.com/duo-labs/cloudmapper

[86] https://www.fugue.co/blog/fugue-developer-free-cloud-security-and-visualization-for-engineers

[87] https://driftctl.com/
