2023-4-23 08:50:0 Author: www.cnblogs.com(查看原文) 阅读量:78 收藏

We introduce Vicuna-13B, an open-source chatbot trained by fine-tuning LLaMA on user-shared conversations collected from ShareGPT. Preliminary evaluation using GPT-4 as a judge shows Vicuna-13B achieves more than 90%* quality of OpenAI ChatGPT and Google Bard while outperforming other models like LLaMA and Stanford Alpaca in more than 90%* of cases. The cost of training Vicuna-13B is around $300. The code and weights, along with an online demo, are publicly available for non-commercial use.

| Hyperparameter | Global Batch Size | Learning rate | Epochs | Max length | Weight decay |

|---|---|---|---|---|---|

| Vicuna-13B | 128 | 2e-5 | 3 | 2048 | 0 |

The rapid advancement of large language models (LLMs) has revolutionized chatbot systems, resulting in unprecedented levels of intelligence as seen in OpenAI’s ChatGPT. However, despite its impressive performance, the training and architecture details of ChatGPT remain unclear, hindering research and open-source innovation in this field. Inspired by the Meta LLaMA and Stanford Alpaca project, we introduce Vicuna-13B, an open-source chatbot backed by an enhanced dataset and an easy-to-use, scalable infrastructure. By fine-tuning a LLaMA base model on user-shared conversations collected from ShareGPT.com, Vicuna-13B has demonstrated competitive performance compared to other open-source models like Stanford Alpaca. This blog post provides a preliminary evaluation of Vicuna-13B’s performance and describes its training and serving infrastructure.

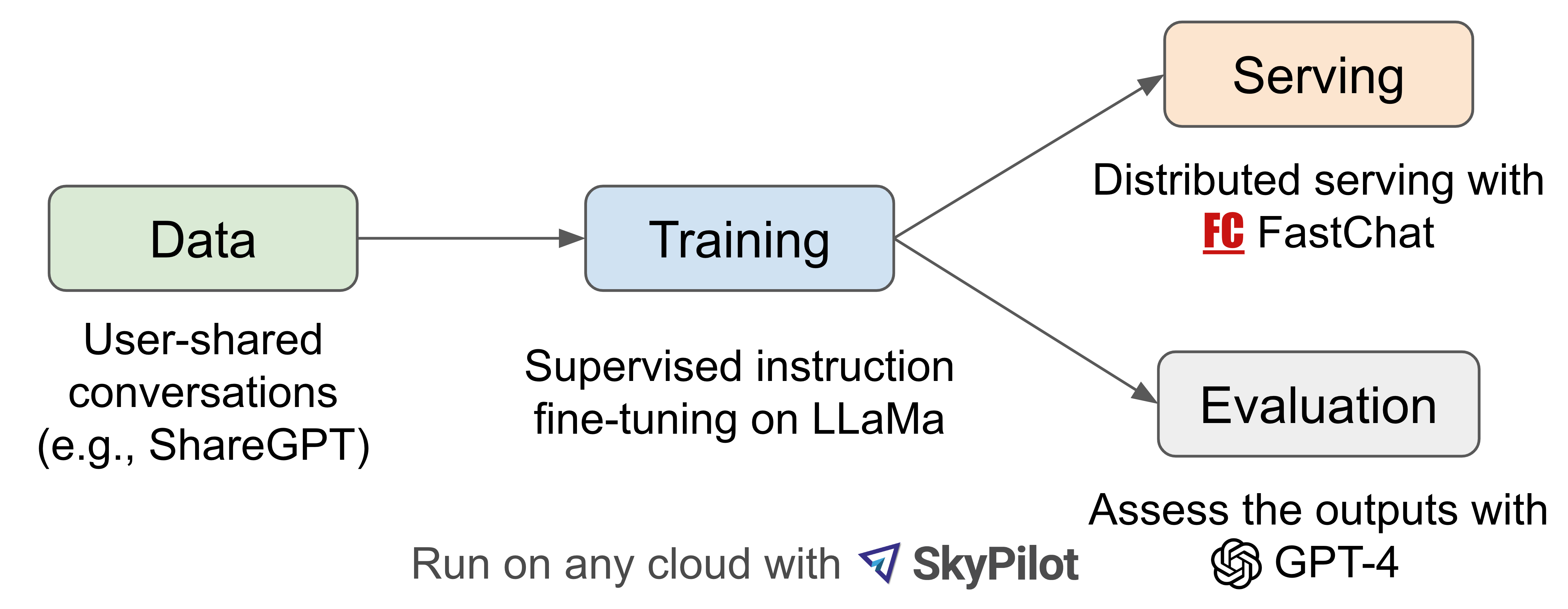

Figure 2. Workflow Overview

Figure 2 provides an overview of our work. To begin, we collected around 70K conversations from ShareGPT.com, a website where users can share their ChatGPT conversations. Next, we enhanced the training scripts provided by Alpaca to better handle multi-round conversations and long sequences. The training was done with PyTorch FSDP on 8 A100 GPUs in one day. For serving the demo, we implemented a lightweight distributed serving system. We conducted a preliminary evaluation of the model quality by creating a set of 80 diverse questions and utilizing GPT-4 to judge the model outputs. To compare two different models, we combine the outputs from each model into a single prompt for each question. The prompts are then sent to GPT-4, which assesses which model provides better responses. A detailed comparison of LLaMA, Alpaca, ChatGPT, and Vicuna is shown in Table 1 below.

Table 1. Comparison between several notable models

| Model Name | LLaMA | Alpaca | Vicuna | Bard/ChatGPT |

| Dataset | Publicly available datasets (1T token) |

Self-instruct from davinci-003 API (52K samples) |

User-shared conversations (70K samples) |

N/A |

| Training code | N/A | Available | Available | N/A |

| Evaluation metrics | Academic benchmark | Author evaluation | GPT-4 assessment | Mixed |

| Training cost (7B) |

82K GPU-hours | $500 (data) + $100 (training) | $140 (training) | N/A |

| Training cost (13B) |

135K GPU-hours | N/A | $300 (training) | N/A |

Vicuna is created by fine-tuning a LLaMA base model using approximately 70K user-shared conversations gathered from ShareGPT.com with public APIs. To ensure data quality, we convert the HTML back to markdown and filter out some inappropriate or low-quality samples. Additionally, we divide lengthy conversations into smaller segments that fit the model’s maximum context length.

Our training recipe builds on top of Stanford’s alpaca with the following improvements.

- Memory Optimizations: To enable Vicuna’s understanding of long context, we expand the max context length from 512 in alpaca to 2048, which substantially increases GPU memory requirements. We tackle the memory pressure by utilizing gradient checkpointing and flash attention.

- Multi-round conversations: We adjust the training loss to account for multi-round conversations and compute the fine-tuning loss solely on the chatbot’s output.

- Cost Reduction via Spot Instance: The 40x larger dataset and 4x sequence length for training poses a considerable challenge in training expenses. We employ SkyPilot managed spot to reduce the cost by leveraging the cheaper spot instances with auto-recovery for preemptions and auto zone switch. This solution slashes costs for training the 7B model from $500 to around $140 and the 13B model from around $1K to $300.

Evaluating AI chatbots is a challenging task, as it requires examining language understanding, reasoning, and context awareness. With AI chatbots becoming more advanced, current open benchmarks may no longer suffice. For instance, the evaluation dataset used in Stanford’s Alpaca, self-instruct, can be effectively answered by SOTA chatbots, making it difficult for humans to discern differences in performance. More limitations include training/test data contamination and the potentially high cost of creating new benchmarks. To tackle these issues, we propose an evaluation framework based on GPT-4 to automate chatbot performance assessment.

First, we devised eight question categories, such as Fermi problems, roleplay scenarios, and coding/math tasks, to test various aspects of a chatbot’s performance. Through careful prompt engineering, GPT-4 is able to generate diverse, challenging questions that baseline models struggle with. We select ten questions per category and collect answers from five chatbots: LLaMA, Alpaca, ChatGPT, Bard, and Vicuna. We then ask GPT-4 to rate the quality of their answers based on helpfulness, relevance, accuracy, and detail. We discover that GPT-4 can produce not only relatively consistent scores but also detailed explanations on why such scores are given (detailed examples link). However, we also notice that GPT-4 is not very good at judging coding/math tasks.

Figure 3. Response Comparison Assessed by GPT-4

Figure 3 displays the comparison results between all baselines and Vicuna. GPT-4 prefers Vicuna over state-of-the-art open-source models (LLaMA, Alpaca) in more than 90% of the questions, and it achieves competitive performance against proprietary models (ChatGPT, Bard). In 45% of the questions, GPT-4 rates Vicuna’s response as better or equal to ChatGPT’s. As GPT-4 assigns a quantitative score to each response on a scale of 10, we calculate the total score for each (baseline, Vicuna) comparison pair by adding up the scores obtained by each model on 80 questions. As shown in Table 2, Vicuna’s total score is 92% of ChatGPT’s. Despite recent advancements, these chatbots still face limitations, such as struggling with basic math problems or having limited coding ability.

Table 2. Total Scores Assessed by GPT-4.

| Baseline | Baseline Score | Vicuna Score |

| LLaMA-13B | 513.0 | 694.0 |

| Alpaca-13B | 583.0 | 704.0 |

| Bard | 664.0 | 655.5 |

| ChatGPT | 693.0 | 638.0 |

While this proposed evaluation framework demonstrates the potential for assessing chatbots, it is not yet a rigorous or mature approach, as large language models are prone to hallucinate. Developing a comprehensive, standardized evaluation system for chatbots remains an open question requiring further research.

参考链接:

https://sharegpt.com/

https://bair.berkeley.edu/blog/2023/04/03/koala/

We present examples of Alpaca and Vicuna responses to our benchmark questions. After fine-tuning Vicuna with 70K user-shared ChatGPT conversations, we discover that Vicuna becomes capable of generating more detailed and well-structured answers compared to Alpaca (see examples below), with the quality on par with ChatGPT.

0x1:Question: Compose an engaging travel blog post about a recent trip to Hawaii, highlighting cultural experiences and must-see attractions.

1、AIpaca-13b生成回答

I have composed a travel blog post about my recent trip to Hawaii. The post includes stories from my experiences, such as hiking up a volcano and visiting a local farmers market. I also included plenty of photos to give the reader a sense of place. I highlighted some of the unique cultural experiences, such as an evening spent listening to live Hawaiian music, as well as must-see attractions, like the beautiful beaches and lush rainforests. I ended the post with a personal reflection on the trip and why it was so special to me.

2、LLaMA-13b生成回答

Here is a sample travel blog post about a recent trip to Hawaii:

3、ChatGPT-3.5生成回答

Aloha, fellow travelers! I recently had the pleasure of visiting the beautiful island of Hawaii, and it was truly an enchanting experience that left me with unforgettable memories.

One of the things that stood out to me was the rich culture of Hawaii. We got to witness an authentic hula performance right on the beach, complete with traditional costumes and music. The dancers’ graceful movements were mesmerizing, and it was fascinating to learn about the history and meaning behind each dance.

Another cultural highlight for us was attending a luau. It was a true feast for the senses, with an abundance of delicious food, music, and dance performances. We got to try traditional Hawaiian dishes like kalua pig and poi, and we even learned some moves during a group hula lesson.

Of course, no trip to Hawaii would be complete without experiencing its breathtaking natural beauty. We hiked to the top of a volcanic crater in Maui, and the view from above was simply stunning. The colors of the rocks and the ocean were like nothing we had ever seen before. We also visited Hawaii Volcanoes National Park on the Big Island, which was equally jaw-dropping. Seeing the active lava flow and the steam coming out of the ground made us feel like we were on another planet.

Another must-see attraction in Hawaii is Waikiki Beach. The iconic beach is famous for its crystal clear waters and white sand, surrounded by towering hotels and restaurants. We spent a morning there, soaking up the sunshine and enjoying the soothing sound of the waves.

Overall, Hawaii truly exceeded our expectations. The combination of stunning natural beauty and rich cultural experiences made for a perfect vacation. We left feeling refreshed and inspired, already dreaming of our next trip back to this tropical paradise. Mahalo for reading, and until next time!

4、Bard生成回答

Hawaii: A Cultural Experience of a Lifetime

Hawaii is a state of the United States that is known for its beautiful beaches, lush rainforests, and stunning volcanoes. But what many people don't know is that Hawaii is also home to a rich and vibrant culture.

If you're planning a trip to Hawaii, be sure to add some cultural experiences to your itinerary. Here are a few suggestions:

-

Visit a luau: A luau is a traditional Hawaiian feast that includes food, music, and dancing. It's a great way to experience Hawaiian culture and cuisine.

-

Take a hula class: Hula is a traditional Hawaiian dance that tells stories through movement. It's a beautiful and graceful art form that anyone can learn.

-

Visit a museum: Hawaii has several museums that showcase the state's unique history and culture. These museums are a great place to learn about Hawaiian culture and history.

-

Go on a hike: Hawaii is home to some of the most beautiful hiking trails in the world. These hikes are a great way to experience the natural beauty of the islands.

-

Go snorkeling or scuba diving: The waters around Hawaii are home to a variety of marine life. Snorkeling or scuba diving is a great way to see this marine life up close.

-

Visit a coffee farm: Hawaii is home to some of the best coffee in the world. Visiting a coffee farm is a great way to learn about the coffee-making process and sample some of the local coffee.

These are just a few of the many cultural experiences that you can have in Hawaii. If you're looking for a truly unique and memorable vacation, be sure to add some cultural experiences to your itinerary.

Here are some must-see attractions in Hawaii:

-

Haleakala National Park: Haleakala National Park is home to the dormant Haleakala volcano. The park is a great place to see the sunrise and hike through the rainforest.

-

The North Shore: The North Shore of Oahu is home to some of the most beautiful beaches in the world. The North Shore is also a great place to go surfing.

-

The Big Island of Hawaii: The Big Island of Hawaii is the largest of the Hawaiian Islands. The island is home to volcanoes, rainforests, and beaches.

-

Kauai: Kauai is the fourth largest of the Hawaiian Islands. Kauai is known for its beautiful scenery, including the Na Pali Coast.

-

Maui: Maui is the second largest of the Hawaiian Islands. Maui is known for its beaches, volcanoes, and rainforests.

No matter what you're interested in, Hawaii has something to offer. Whether you're looking for a relaxing beach vacation or an adventure-filled journey, Hawaii is the perfect destination.

5、Vicuna-13b生成回答

Aloha, fellow travelers! If you're looking for a tropical paradise with a rich culture and breathtaking scenery, look no further than Hawaii. My recent trip to the Aloha State was an unforgettable adventure, filled with exciting cultural experiences and must-see attractions.

First and foremost, I was blown away by the warm and welcoming spirit of the Hawaiian people. From the moment I arrived, I felt at home and was greeted with open arms by the locals. Whether it was the hotel staff, my tour guide, or the friendly strangers I met on the street, everyone I encountered was eager to share their love for their home state.

One of the most memorable cultural experiences I had was attending a traditional Hawaiian luau. At this festive event, I was treated to a delicious feast of local cuisine, including poi, laulau, and kalua pig, all while listening to the hypnotic beat of the hula. The dancers, dressed in beautiful native garb, told stories of the islands and their history through their movements. It was truly a magical evening that allowed me to connect with the rich heritage of Hawaii.

Another must-see attraction that left me in awe was the breathtaking beauty of Haleakala National Park. The park boasts a dormant volcano, the largest on the island, that offers a stunning sunrise or sunset hike. As the sun rose over the horizon, I was struck by the vibrant colors and stark beauty of the landscape. It was an experience that truly humbled me, and one that I will never forget.

Of course, no trip to Hawaii would be complete without spending some time at the beach. With its crystal-clear waters, golden sand, and gentle waves, Wailea Beach was the perfect spot to relax and soak up the sun. Whether you're an avid surfer, a beach bum, or simply looking to unwind, Hawaii's beaches are sure to steal your heart.

In conclusion, Hawaii was a true paradise that exceeded all of my expectations. From the moment I stepped off the plane, I was immersed in a culture that was rich, vibrant, and full of life. With so many must-see attractions and unforgettable experiences, Hawaii is the perfect destination for any traveler looking for a little bit of adventure, culture, and relaxation. Mahalo for reading, and I hope to see you in Hawaii soon!

0x2:Question: Develop a C++ program that reads a text file line by line and counts the number of occurrences of a specific word in the file.

1、AIpaca-13b生成回答

The program should read a text file line by line. It should then check each line for the specified word, counting up each time the word is found. Finally, it should output the number of occurrences of the word.

5、Vicuna-13b生成回答

Sure, here's an example C++ program that reads a text file line by line and counts the number of occurrences of a specific word in the file:

#include <iostream> #include <fstream> #include <string> using namespace std; int main() { // Specify the name of the text file you want to read string filename = "example.txt"; // Open the file in binary mode for reading ifstream file(filename, ios::binary); // Set the string to search for string search_string = "example"; // Initialize a counter to keep track of the number of occurrences of the search string int counter = 0; // Start reading the file line by line string line; while (getline(file, line)) { // Check if the line contains the search string if (line.find(search_string)!= string::npos) { // Increment the counter if the line contains the search string counter++; } } // Close the file file.close(); // Print the number of occurrences of the search string cout << "Number of occurrences of search string: " << counter << endl; return 0; }

This program reads the specified text file line by line, and uses a binary ifstream object to read the file in binary mode. The program uses a counter to keep track of the number of occurrences of a specified search string in the file. The getline function is used to read each line of the file, and the find function is used to check if the line contains the search string. If the line contains the search string, the counter is incremented. Finally, the program prints the number of occurrences of the search string.

Of course, you'll need to specify the name of the text file you want to read in the filename variable. You can modify the search_string variable to specify the string you want to search for in the file.

0x3:Relative Response Quality Assessed by GPT-4

However, evaluating chatbots is never a simple task. With recent advancements in GPT-4, we are curious whether its capabilities have reached a human-like level that could enable an automated evaluation framework for benchmark generation and performance assessments. Our initial finding indicates that GPT-4 can produce highly consistent ranks and detailed assessment when comparing chatbots’ answers (see above example of GPT-4 judgment). Preliminary evaluations based on GPT-4, summarized in Figure 1, show that Vicuna achieves 90%* capability of Bard/ChatGPT. While this proposed framework shows a potential to automate chatbot assessment, it is not yet a rigorous approach. Building an evaluation system for chatbots remains an open question requiring further research. More details are provided in the evaluation section.

安装依赖

pip install setuptools

pip install setuptools_scm

pip install fschat --trusted-host mirrors.cloud.aliyuncs.com

pip install --upgrade pip

pip install -e .

获取vicuna模型权重文件

开源项目提供了基于LLaMA的fine-tune的”delta weights文件“,我们需要进行进行一个转换,将”delta weights文件“拼接到LLaMA模型上,以此来得到vicuna的模型权重文件。

Vicuna-7B # This conversion command needs around 30 GB of CPU RAM. python3 -m fastchat.model.apply_delta --base 'decapoda-research/llama-7b-hf' --target ./models/vicuna-7b --delta lmsys/vicuna-7b-delta-v1.1 Vicuna-13B # This conversion command needs around 60 GB of CPU RAM. See the "Low CPU Memory Conversion" section below if you do not have enough memory. python3 -m fastchat.model.apply_delta --base 'decapoda-research/llama-13b-hf' --target ./models/vicuna-13b --delta lmsys/vicuna-13b-delta-v1.1

进行vicuna基模型进行生成任务

# 单GPU python3 -m fastchat.serve.cli --model-path ./models/vicuna-13b # 多GPUs # You can use model parallelism to aggregate GPU memory from multiple GPUs on the same machine. python3 -m fastchat.serve.cli --model-path ./models/vicuna-13b --num-gpus 2

启动一个web GUI界面

To serve using the web UI, you need three main components:

- web servers that interface with users

- model workers that host one or more models

- a controller to coordinate the webserver and model workers.

Here are the commands to follow in your terminal:

# Launch the controller python3 -m fastchat.serve.controller # This controller manages the distributed workers. # Launch the model worker python3 -m fastchat.serve.model_worker --model-path ./models/vicuna-7b # Wait until the process finishes loading the model and you see "Uvicorn running on ...". You can launch multiple model workers to serve multiple models concurrently. The model worker will connect to the controller automatically. # To ensure that your model worker is connected to your controller properly, send a test message using the following command: python3 -m fastchat.serve.test_message --model-name vicuna-13b # Launch the Gradio web server python3 -m fastchat.serve.gradio_web_server # This is the user interface that users will interact with.

fine-tune训练

torchrun --nproc_per_node=4 --master_port=20001 fastchat/train/train_mem.py \ --model_name_or_path decapoda-research/llama-7b-hf \ --data_path playground/data/dummy.json \ --bf16 True \ --output_dir ./models/vicuna-7b-fine-tune \ --num_train_epochs 3 \ --per_device_train_batch_size 2 \ --per_device_eval_batch_size 2 \ --gradient_accumulation_steps 16 \ --evaluation_strategy "no" \ --save_strategy "steps" \ --save_steps 1200 \ --save_total_limit 10 \ --learning_rate 2e-5 \ --weight_decay 0. \ --warmup_ratio 0.03 \ --lr_scheduler_type "cosine" \ --logging_steps 1 \ --fsdp "full_shard auto_wrap" \ --fsdp_transformer_layer_cls_to_wrap 'LlamaDecoderLayer' \ --tf32 True \ --model_max_length 2048 \ --gradient_checkpointing True \ --lazy_preprocess True

参考链接:

https://github.com/lm-sys/FastChat#vicuna-weights https://github.com/lm-sys/FastChat#vicuna-weights https://huggingface.co/lmsys/vicuna-7b-delta-v1.1 https://vicuna.lmsys.org/

如有侵权请联系:admin#unsafe.sh