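netlink can deliver the 5-tuple of network connections in real time, which makes it useful for visualizing network activity, detecting anomalous connections, refining security policies, and auditing. Few articles online cover this topic, so this post analyzes how netlink obtains connection information in real time.

What is netlink? The official documentation describes it as follows: Netlink is used to transfer information between the kernel and user-space processes. It consists of a standard sockets-based interface for user space processes and an internal kernel API for kernel modules.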


https://man7.org/linux/man-pages/man7/netlink.7.html

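netlink implements a communication mechanism between user space and kernel space, and many kernel modules can be reached through it. For example, the ss command uses netlink to fetch socket information faster, HIDS agents use netlink to receive process-creation events, and iptables (in its nftables-based variant) configures rules through netlink. The official documentation gives the following usage outline:

```c
...
fd = socket(AF_NETLINK, SOCK_RAW, protocol);
bind(fd, (struct sockaddr *) &sa, sizeof(sa));
...
sendmsg(fd, &msg, 0);
...
len = recvmsg(fd, &msg, 0);
...
```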
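For reference, here is one way the outline above might be filled in as a complete program. It is a sketch that uses NETLINK_ROUTE with an RTM_GETLINK dump request only because that protocol is available on practically every Linux system; the helper names nl_build_getlink and nl_getlink_roundtrip are hypothetical, and the reply is only measured, not parsed.

```c
#include <linux/netlink.h>
#include <linux/rtnetlink.h>
#include <string.h>
#include <sys/socket.h>
#include <unistd.h>

/* Write an RTM_GETLINK dump request (header + rtgenmsg) into buf.
 * Returns the message length, or 0 if buf is too small. */
static size_t nl_build_getlink(void *buf, size_t buflen, unsigned int seq)
{
    size_t len = NLMSG_LENGTH(sizeof(struct rtgenmsg));
    if (buflen < len)
        return 0;
    memset(buf, 0, len);

    struct nlmsghdr *nlh = buf;
    nlh->nlmsg_len = len;
    nlh->nlmsg_type = RTM_GETLINK;
    nlh->nlmsg_flags = NLM_F_REQUEST | NLM_F_DUMP;
    nlh->nlmsg_seq = seq;
    nlh->nlmsg_pid = 0;

    struct rtgenmsg *g = NLMSG_DATA(nlh);
    g->rtgen_family = AF_UNSPEC;
    return len;
}

/* socket -> bind -> sendmsg -> recvmsg, following the outline above.
 * Returns the size of the first reply datagram, or -1 on error. */
static ssize_t nl_getlink_roundtrip(void)
{
    int fd = socket(AF_NETLINK, SOCK_RAW, NETLINK_ROUTE);
    if (fd < 0)
        return -1;

    struct sockaddr_nl sa = { .nl_family = AF_NETLINK };  /* nl_pid 0: kernel assigns */
    if (bind(fd, (struct sockaddr *)&sa, sizeof(sa)) < 0) {
        close(fd);
        return -1;
    }

    /* The union keeps the request buffer aligned for struct nlmsghdr. */
    union { struct nlmsghdr h; char raw[NLMSG_SPACE(sizeof(struct rtgenmsg))]; } req;
    struct sockaddr_nl kernel = { .nl_family = AF_NETLINK }; /* nl_pid 0: the kernel */
    struct iovec iov = { .iov_base = &req,
                         .iov_len = nl_build_getlink(&req, sizeof(req), 1) };
    struct msghdr msg = { .msg_name = &kernel, .msg_namelen = sizeof(kernel),
                          .msg_iov = &iov, .msg_iovlen = 1 };
    if (sendmsg(fd, &msg, 0) < 0) {
        close(fd);
        return -1;
    }

    static char reply[16384];
    iov.iov_base = reply;
    iov.iov_len = sizeof(reply);
    ssize_t len = recvmsg(fd, &msg, 0);
    close(fd);
    return len;
}
```

Passing nl_pid = 0 to bind() lets the kernel assign the port id, and addressing sendmsg() to nl_pid = 0 targets the kernel itself.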

The protocol argument of socket() selects the netlink protocol the socket uses, and each protocol talks to a different kernel module. The supported protocols are defined in include/linux/netlink.h:

```c
// include/linux/netlink.h
#define NETLINK_INET_DIAG  4   /* INET socket monitoring */
#define NETLINK_AUDIT      9   /* auditing */
#define NETLINK_CONNECTOR  11
#define NETLINK_NETFILTER  12  /* netfilter subsystem */
```

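NETLINK_INET_DIAG retrieves socket (connection) data, NETLINK_AUDIT delivers kernel audit records (the data auditd consumes), and NETLINK_CONNECTOR can deliver process-creation events. NETLINK_NETFILTER is the protocol covered in the rest of this article: it is the one that delivers connection information in real time.

Is passing AF_NETLINK to socket() enough to start talking to netlink? To answer that, we need to look at how netlink is initialized:

```c
// net/netlink/af_netlink.c
core_initcall(netlink_proto_init);
```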

core_initcall registers netlink_proto_init as a core initialization function that runs automatically during kernel boot, so netlink is always available and never needs to be loaded. Here is what netlink_proto_init does:

```c
// net/netlink/af_netlink.c
static int __init netlink_proto_init(void)
{
    ...
    nl_table = kcalloc(MAX_LINKS, sizeof(*nl_table), GFP_KERNEL);
    ...
    sock_register(&netlink_family_ops);
    ...
}

struct netlink_table {
    struct nl_pid_hash hash;   // holds sk_node entries, used for unicast
    struct hlist_head mc_list; // holds sk_bind_node entries, used for multicast
    unsigned long *listeners;  // tracks which multicast groups currently have listeners
    unsigned int nl_nonroot;
    unsigned int groups;       // number of multicast groups
    struct mutex *cb_mutex;
    struct module *module;
    int registered;            // whether this netlink protocol has been registered
};

static struct netlink_table *nl_table;
```

Two things in netlink_proto_init matter here: nl_table and sock_register. nl_table records the configuration and state of every netlink protocol; it comes up again when we look at how a netlink protocol is registered. sock_register adds netlink_family_ops to net_families:

```c
// net/netlink/af_netlink.c
static struct net_proto_family netlink_family_ops = {
    .family = PF_NETLINK,
    .create = netlink_create,
    .owner  = THIS_MODULE, /* for consistency 8) */
};

// net/socket.c
int sock_register(const struct net_proto_family *ops)
{
    ...
    net_families[ops->family] = ops;
    ...
}

static const struct net_proto_family *net_families[NPROTO] __read_mostly;

struct net_proto_family {
    int family;
    int (*create)(struct net *net, struct socket *sock, int protocol);
    struct module *owner;
};
```

net_families is an array of net_proto_family pointers indexed by address family. After registration, net_families[PF_NETLINK]->create == netlink_create, which completes module initialization. When a process calls socket(), the kernel invokes netlink_create through net_families:

```c
// net/socket.c
SYSCALL_DEFINE3(socket, int, family, int, type, int, protocol)
{
    ...
    retval = sock_create(family, type, protocol, &sock);
    ...
}

int sock_create(int family, int type, int protocol, struct socket **res)
{
    return __sock_create(current->nsproxy->net_ns, family, type, protocol, res, 0);
}

static int __sock_create(struct net *net, ...)
{
    ...
    pf = rcu_dereference(net_families[family]);
    err = pf->create(net, sock, protocol);
    ...
}
```
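To make the dispatch concrete, here is a user-space toy model of sock_register() and __sock_create(): an array indexed by address family holds a create callback, and socket creation simply looks up the slot and calls it. Everything named demo_* is hypothetical; the error codes follow the kernel's choices (-ENOBUFS, -EEXIST, -EAFNOSUPPORT) as far as I know, while RCU and module autoloading are left out.

```c
#include <errno.h>

/* A user-space toy model of net_families[] dispatch; not kernel code. */
#define DEMO_NPROTO 46           /* arbitrary table size for the model */

struct demo_family {
    int family;
    int (*create)(int protocol); /* stands in for net_proto_family.create */
};

static const struct demo_family *demo_families[DEMO_NPROTO];

/* Idea of sock_register(): store ops at index ops->family. */
static int demo_sock_register(const struct demo_family *ops)
{
    if (ops->family < 0 || ops->family >= DEMO_NPROTO)
        return -ENOBUFS;
    if (demo_families[ops->family])
        return -EEXIST;          /* a family can only be registered once */
    demo_families[ops->family] = ops;
    return 0;
}

/* Idea of __sock_create(): look up the family, then call its create(). */
static int demo_sock_create(int family, int protocol)
{
    if (family < 0 || family >= DEMO_NPROTO || !demo_families[family])
        return -EAFNOSUPPORT;
    return demo_families[family]->create(protocol);
}

/* A fake "netlink_create" that just echoes the protocol it was asked for. */
static int demo_netlink_create(int protocol) { return protocol; }

static const struct demo_family demo_netlink_family = {
    .family = 16,                /* PF_NETLINK is 16 on Linux */
    .create = demo_netlink_create,
};
```

Before registration the lookup fails with -EAFNOSUPPORT; afterwards demo_sock_create(16, 12) reaches demo_netlink_create, just as socket(AF_NETLINK, ...) reaches netlink_create.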

socket() ultimately calls net_families[family]->create. In the official example a netlink socket is created with fd = socket(AF_NETLINK, SOCK_RAW, netlink_family), and include/linux/socket.h defines #define PF_NETLINK AF_NETLINK, so net_families[family]->create is exactly netlink_create. Here is what netlink_create does:

```c
// net/netlink/af_netlink.c
static int netlink_create(struct net *net, struct socket *sock, int protocol)
{
    ...
    err = __netlink_create(net, sock, cb_mutex, protocol);
    ...
}

static int __netlink_create(struct net *net, ...)
{
    ...
    sock->ops = &netlink_ops;
    // allocate sk and associate it with the netlink_proto structure
    sk = sk_alloc(net, PF_NETLINK, GFP_KERNEL, &netlink_proto);
    ...
}

// ops functions for netlink sockets
static const struct proto_ops netlink_ops = {
    ...
    .bind       = netlink_bind,
    .setsockopt = netlink_setsockopt,
    .sendmsg    = netlink_sendmsg,
    .recvmsg    = netlink_recvmsg,
    ...
};

// the sock structure for netlink sockets
struct netlink_sock {
    struct sock sk;
    u32 pid;
    u32 dst_pid;
    u32 dst_group;
    u32 flags;
    u32 subscriptions;
    u32 ngroups;
    unsigned long *groups;
    unsigned long state;
    wait_queue_head_t wait;
    struct netlink_callback *cb;
    struct mutex *cb_mutex;
    struct mutex cb_def_mutex;
    void (*netlink_rcv)(struct sk_buff *skb);
    struct module *module;
};
```

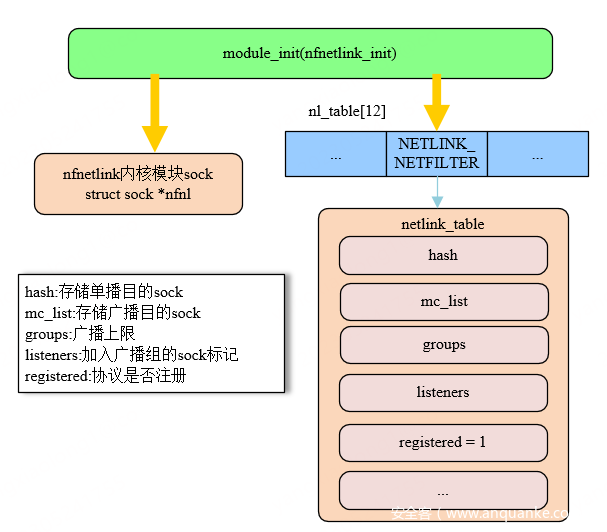

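netlink_create assigns sock->ops = &netlink_ops. In other words, the socket obtained from socket(AF_NETLINK, SOCK_RAW, netlink_family) has its bind, sendmsg, recvmsg, and other operations replaced by the netlink protocol's implementations. It also associates socket->sk with a netlink_sock structure, which holds the data netlink communication needs, such as dst_pid and dst_group. Because sk is the first member of netlink_sock, nlk_sk(sk) can later convert a struct sock * back into its struct netlink_sock *.

Registering a netlink protocol follows essentially the same flow for every protocol. This section uses NETLINK_NETFILTER, which is analyzed later, as the example:

```c
// net/netfilter/nfnetlink.c
static int __init nfnetlink_init(void)
{
    ...
    nfnl = netlink_kernel_create(&init_net, NETLINK_NETFILTER, NFNLGRP_MAX,
                                 nfnetlink_rcv, NULL, THIS_MODULE);
    ...
}
module_init(nfnetlink_init);
```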

Unlike netlink, the netfilter netlink module is not loaded by default. When it is loaded, it calls netlink_kernel_create:

```c
// net/netlink/af_netlink.c
struct sock *
netlink_kernel_create(struct net *net, int unit, unsigned int groups,
                      void (*input)(struct sk_buff *skb), ...)
{
    ...
    struct socket *sock;
    struct sock *sk;
    unsigned long *listeners = NULL;

    // create a socket for the kernel module
    sock_create_lite(PF_NETLINK, SOCK_DGRAM, unit, &sock);
    __netlink_create(&init_net, sock, cb_mutex, unit);
    sk = sock->sk;
    listeners = kzalloc(NLGRPSZ(groups) + sizeof(struct listeners_rcu_head),
                        GFP_KERNEL);

    // install the callback: netlink_rcv = input (nfnetlink_rcv for netfilter)
    if (input)
        nlk_sk(sk)->netlink_rcv = input;

    // update the protocol state
    nl_table[unit].groups = groups;
    nl_table[unit].listeners = listeners;
    nl_table[unit].cb_mutex = cb_mutex;
    nl_table[unit].module = module;
    nl_table[unit].registered = 1;
    ...
}
```

netlink_kernel_create returns a socket to the netfilter kernel module, sets that socket's netlink_rcv function to nfnetlink_rcv, and finally updates nl_table to mark the NETLINK_NETFILTER protocol as active.

Next, follow send() as it delivers a netlink message to the kernel; this path shows what nfnetlink_rcv and nl_table are for:

```c
// net/socket.c
SYSCALL_DEFINE4(send, int, fd, ...)
{
    return sys_sendto(fd, buff, len, flags, NULL, 0);
}

SYSCALL_DEFINE6(sendto, int, fd, ...)
{
    ...
    err = sock_sendmsg(sock, &msg, len);
    ...
}

int sock_sendmsg(struct socket *sock, struct msghdr *msg, size_t size)
{
    ...
    ret = __sock_sendmsg(&iocb, sock, msg, size);
    ...
}

static inline int __sock_sendmsg(struct kiocb *iocb, ...)
{
    ...
    return sock->ops->sendmsg(iocb, sock, msg, size);
}
```

send() ends up calling sock->ops->sendmsg, which is netlink_sendmsg:

```c
// net/netlink/af_netlink.c
static int netlink_sendmsg(struct kiocb *kiocb, ...)
{
    ...
    NETLINK_CB(skb).dst_group = dst_group;
    if (dst_group) {
        atomic_inc(&skb->users);
        netlink_broadcast(sk, skb, dst_pid, dst_group, GFP_KERNEL);
    }
    err = netlink_unicast(sk, skb, dst_pid, msg->msg_flags & MSG_DONTWAIT);
    ...
}
```

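Depending on dst_group, netlink_sendmsg calls netlink_broadcast and netlink_unicast; these two functions are how netlink delivers data to a given process or kernel module, by multicast or by unicast. When dst_group is set, the message is broadcast to that group and also unicast to dst_pid, which is why skb->users is incremented first. A message from user space to a kernel module naturally goes through unicast, netlink_unicast:

```c
// net/netlink/af_netlink.c
int netlink_unicast(struct sock *ssk, ...)
{
    ...
    if (netlink_is_kernel(sk))
        return netlink_unicast_kernel(sk, skb);
    err = netlink_attachskb(sk, skb, &timeo, ssk);
    return netlink_sendskb(sk, skb);
}

// deliver a message to the kernel
static inline int netlink_unicast_kernel(struct sock *sk, struct sk_buff *skb)
{
    ...
    struct netlink_sock *nlk = nlk_sk(sk);
    nlk->netlink_rcv(skb);
    ...
}
```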
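The two delivery paths can be modeled in a few lines of user-space C. This is a toy model with hypothetical demo_* names and int messages instead of sk_buffs: a kernel socket's netlink_rcv callback (installed by netlink_kernel_create from its input argument) is called synchronously, while a user socket gets the message appended to its receive queue. The real netlink_unicast_kernel returns -ECONNREFUSED when no callback is set; the model simply returns -1.

```c
#include <stdbool.h>
#include <stddef.h>

/* Toy model of netlink_unicast(); not kernel code. */
#define DEMO_QLEN 8

struct demo_nlsock {
    bool is_kernel;                   /* what netlink_is_kernel() reports */
    void (*netlink_rcv)(int msg);     /* set from the 'input' argument */
    int queue[DEMO_QLEN];             /* stands in for sk_receive_queue */
    size_t qlen;
};

static int demo_unicast(struct demo_nlsock *dst, int msg)
{
    if (dst->is_kernel) {             /* netlink_unicast_kernel() path */
        if (!dst->netlink_rcv)
            return -1;
        dst->netlink_rcv(msg);        /* runs synchronously in the sender's context */
        return 0;
    }
    if (dst->qlen == DEMO_QLEN)       /* receive buffer full */
        return -1;
    dst->queue[dst->qlen++] = msg;    /* netlink_sendskb(): append at the tail */
    return 0;
}

/* Stand-in for nfnetlink_rcv: remembers the last message it saw. */
static int demo_last_rcv = -1;
static void demo_nfnetlink_rcv(int msg) { demo_last_rcv = msg; }
```

A message sent to the kernel socket never touches a queue, which is why the kernel side needs no recvmsg call.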

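During unicast, netlink_unicast uses netlink_is_kernel to decide whether the destination is a kernel socket. If it is, it calls the input function registered when the kernel module was initialized, nfnetlink_rcv in this case, which completes the path from user space to the target kernel module. Messages destined for user-space sockets are instead queued by these helpers of netlink_unicast and netlink_broadcast:

```c
// net/netlink/af_netlink.c
int netlink_sendskb(struct sock *sk, struct sk_buff *skb)
{
    ...
    skb_queue_tail(&sk->sk_receive_queue, skb);
    ...
}

static inline int netlink_broadcast_deliver(struct sock *sk,
                                            struct sk_buff *skb)
{
    ...
    skb_queue_tail(&sk->sk_receive_queue, skb);
    ...
}
```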

Both netlink_unicast and netlink_broadcast therefore end with skb_queue_tail, which appends the data to the sk->sk_receive_queue queue. recvmsg then receives the messages coming from the kernel:

```c
// net/socket.c
SYSCALL_DEFINE3(recvmsg, int, fd, struct msghdr __user *, msg,
                unsigned int, flags)
{
    ...
    err = sock_recvmsg(sock, &msg_sys, total_len, flags);
    ...
}

int sock_recvmsg(struct socket *sock, struct msghdr *msg,
                 size_t size, int flags)
{
    ...
    ret = __sock_recvmsg(&iocb, sock, msg, size, flags);
    ...
}

static inline int __sock_recvmsg(struct kiocb *iocb, ...)
{
    ...
    return sock->ops->recvmsg(iocb, sock, msg, size, flags);
}
```

recvmsg again ends up in sock->ops->recvmsg, that is, netlink_recvmsg:

```c
// net/netlink/af_netlink.c
static int netlink_recvmsg(struct kiocb *kiocb, ...)
{
    ...
    struct sock *sk = sock->sk;
    skb = skb_recv_datagram(sk, flags, noblock, &err);
    err = skb_copy_datagram_iovec(skb, 0, msg->msg_iov, copied);
    ...
}

// net/core/datagram.c
struct sk_buff *__skb_recv_datagram(struct sock *sk, ...)
{
    ...
    skb = skb_peek(&sk->sk_receive_queue);
    ...
}
```
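On the user-space side, a single datagram copied out of sk_receive_queue can carry several netlink messages back to back (dump replies usually do), so a receiver walks the buffer with the NLMSG_OK and NLMSG_NEXT macros from linux/netlink.h. A sketch, where nl_walk and its callback signature are hypothetical:

```c
#include <linux/netlink.h>
#include <stddef.h>

/* Walk every netlink message contained in one datagram returned by recvmsg().
 * Calls cb(type, payload_len) for each message (cb may be NULL) and stops after
 * NLMSG_DONE or NLMSG_ERROR. Returns the number of messages visited. */
static int nl_walk(void *buf, int len,
                   void (*cb)(unsigned short type, unsigned int plen))
{
    int count = 0;
    /* NLMSG_OK checks the header fits in the remaining length;
     * NLMSG_NEXT shrinks len and advances to the next aligned header. */
    for (struct nlmsghdr *nlh = buf; NLMSG_OK(nlh, len); nlh = NLMSG_NEXT(nlh, len)) {
        count++;
        if (cb)
            cb(nlh->nlmsg_type, NLMSG_PAYLOAD(nlh, 0));
        if (nlh->nlmsg_type == NLMSG_DONE || nlh->nlmsg_type == NLMSG_ERROR)
            break;
    }
    return count;
}
```

A truncated trailing header is simply not visited, because NLMSG_OK rejects any message whose nlmsg_len exceeds the bytes left.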

The receive path for netlink messages is therefore simple: messages are copied from socket->sk->sk_receive_queue into the supplied msghdr->msg_iov.

netfilter is a kernel framework for packet filtering, packet mangling, and network address translation on Linux. To implement these features, netfilter places hooks at various points in the network stack. For example, in ip_rcv, the entry point of the IP layer, netfilter processes packets through NF_HOOK:

```c
// net/ipv4/ip_input.c
int ip_rcv(struct sk_buff *skb, struct net_device *dev, struct packet_type *pt,
           struct net_device *orig_dev)
{
    ...
    return NF_HOOK(PF_INET, NF_INET_PRE_ROUTING, skb, dev, NULL,
                   ip_rcv_finish);
}

// net/netfilter/core.c
int nf_hook_slow(u_int8_t pf, unsigned int hook, struct sk_buff *skb, ...)
{
    ...
    elem = &nf_hooks[pf][hook];
    verdict = nf_iterate(&nf_hooks[pf][hook], skb, hook, indev,
                         outdev, &elem, okfn, hook_thresh);
    ...
}

unsigned int nf_iterate(struct list_head *head, ...)
{
    ...
    list_for_each_continue_rcu(*i, head) {
        struct nf_hook_ops *elem = (struct nf_hook_ops *)*i;
        verdict = elem->hook(hook, skb, indev, outdev, okfn);
        ...
    }
    ...
}
```
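The hook-list mechanics can be sketched as a user-space toy model (all demo_* names are hypothetical): hooks for one (pf, hooknum) slot are kept sorted by priority, a new hook goes after existing hooks of equal priority, and iteration stops at the first verdict other than accept. The verdict values mirror NF_DROP = 0 and NF_ACCEPT = 1; the real nf_iterate also handles NF_REPEAT, queueing, and hook_thresh, which the model leaves out.

```c
#include <stddef.h>

/* Toy model of nf_register_hook() + nf_iterate() for a single hook slot. */
enum { DEMO_NF_DROP = 0, DEMO_NF_ACCEPT = 1 };
#define DEMO_MAX_HOOKS 8

struct demo_hook_ops {
    unsigned int (*hook)(int pkt);
    int priority;                 /* lower value runs earlier */
};

struct demo_hook_list {
    const struct demo_hook_ops *ops[DEMO_MAX_HOOKS];
    size_t n;
};

/* Insert while keeping the list sorted by ascending priority. */
static int demo_register_hook(struct demo_hook_list *l, const struct demo_hook_ops *reg)
{
    if (l->n == DEMO_MAX_HOOKS)
        return -1;
    size_t i = l->n;
    while (i > 0 && l->ops[i - 1]->priority > reg->priority) {
        l->ops[i] = l->ops[i - 1];    /* shift higher-priority-value hooks right */
        i--;
    }
    l->ops[i] = reg;
    l->n++;
    return 0;
}

/* Run hooks in order; the first non-ACCEPT verdict ends the walk. */
static unsigned int demo_iterate(const struct demo_hook_list *l, int pkt)
{
    for (size_t i = 0; i < l->n; i++) {
        unsigned int verdict = l->ops[i]->hook(pkt);
        if (verdict != DEMO_NF_ACCEPT)
            return verdict;
    }
    return DEMO_NF_ACCEPT;
}

/* Sample hooks: one only counts packets, one drops odd-numbered packets. */
static int demo_seen;
static unsigned int demo_count_hook(int pkt) { (void)pkt; demo_seen++; return DEMO_NF_ACCEPT; }
static unsigned int demo_drop_odd_hook(int pkt) { return (pkt % 2) ? DEMO_NF_DROP : DEMO_NF_ACCEPT; }
```

Registering the counter at priority 100 and the filter at -100 makes the filter run first, so dropped packets never reach the counter; this ordering is why conntrack's confirm hook uses a dedicated priority such as NF_IP_PRI_CONNTRACK_CONFIRM.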

NF_HOOK eventually enters nf_iterate, which walks the hooks in nf_hooks[pf][hook] and runs each one. Let's see how nf_hooks gets populated:

```c
// net/ipv4/netfilter/nf_conntrack_l3proto_ipv4.c
static int __init nf_conntrack_l3proto_ipv4_init(void)
{
    ...
    ret = nf_register_hooks(ipv4_conntrack_ops, ARRAY_SIZE(ipv4_conntrack_ops));
    ...
}

// net/netfilter/core.c
int nf_register_hooks(struct nf_hook_ops *reg, unsigned int n)
{
    ...
    for (i = 0; i < n; i++) {
        err = nf_register_hook(&reg[i]);
    }
    ...
}
```

The nf_conntrack_l3proto_ipv4 kernel module implements IPv4 connection tracking, and as shown it registers ipv4_conntrack_ops into nf_hooks. Here is what ipv4_conntrack_ops does:

```c
// net/ipv4/netfilter/nf_conntrack_l3proto_ipv4.c
static struct nf_hook_ops ipv4_conntrack_ops[] __read_mostly = {
    ...
    {
        .hook     = ipv4_confirm,
        .owner    = THIS_MODULE,
        .pf       = NFPROTO_IPV4,
        .hooknum  = NF_INET_LOCAL_IN,
        .priority = NF_IP_PRI_CONNTRACK_CONFIRM,
    },
};

static unsigned int ipv4_confirm(unsigned int hooknum, ...)
{
    ...
    return nf_conntrack_confirm(skb);
}

// include/net/netfilter/nf_conntrack_core.h
static inline int nf_conntrack_confirm(struct sk_buff *skb)
{
    ...
    nf_ct_deliver_cached_events(ct);
    ...
}

// net/netfilter/nf_conntrack_ecache.c
void nf_ct_deliver_cached_events(struct nf_conn *ct)
{
    ...
    notify = rcu_dereference(nf_conntrack_event_cb);
    ret = notify->fcn(events | missed, &item);
    ...
}
```

So, through nf_hooks, NF_HOOK eventually runs nf_conntrack_event_cb->fcn. nf_conntrack_event_cb is a global pointer that holds the callback for connection-tracking events:

```c
// net/netfilter/nf_conntrack_netlink.c
static int __init ctnetlink_init(void)
{
    ...
    ret = nf_conntrack_register_notifier(&ctnl_notifier);
    ...
}

// net/netfilter/nf_conntrack_ecache.c
int nf_conntrack_register_notifier(struct nf_ct_event_notifier *new)
{
    ...
    rcu_assign_pointer(nf_conntrack_event_cb, new);
    ...
}
```

nf_conntrack_event_cb is registered in net/netfilter/nf_conntrack_netlink.c and points to ctnl_notifier:

```c
// net/netfilter/nf_conntrack_netlink.c
static struct nf_ct_event_notifier ctnl_notifier = {
    .fcn = ctnetlink_conntrack_event,
};

static int
ctnetlink_conntrack_event(unsigned int events, struct nf_ct_event *item)
{
    ...
    // different event types are sent to different groups
    if (events & (1 << IPCT_DESTROY)) {
        type = IPCTNL_MSG_CT_DELETE;
        group = NFNLGRP_CONNTRACK_DESTROY;
    } else if (events & ((1 << IPCT_NEW) | (1 << IPCT_RELATED))) {
        type = IPCTNL_MSG_CT_NEW;
        flags = NLM_F_CREATE|NLM_F_EXCL;
        group = NFNLGRP_CONNTRACK_NEW;
    }
    ...
    ctnetlink_dump_master(skb, ct);
    ...
    // ends up in netlink_broadcast
    err = nfnetlink_send(skb, item->pid, group, item->report, GFP_ATOMIC);
    ...
}

// item->ct->tuplehash->tuple
// the 5-tuple data
struct nf_conntrack_tuple {
    struct nf_conntrack_man src;
    struct {
        union nf_inet_addr u3;
        union {
            __be16 all;
            struct {
                __be16 port;
            } tcp;
            ...
        } u;
        ...
    } dst;
};
```
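The branch that picks the group can be tried out on its own. The sketch below re-implements only that decision in user space; ct_classify and struct ct_route are hypothetical, and the constants are local copies meant to match the kernel's IPCT_* bit numbers, IPCTNL_MSG_CT_* types, and NFNLGRP_CONNTRACK_* groups (the real headers should be preferred). The final else-if, which sends any other non-empty event mask to NFNLGRP_CONNTRACK_UPDATE, is elided in the excerpt above; it is included here based on my reading of the kernel source, so treat it as an assumption.

```c
#include <linux/netlink.h>

/* Local copies (assumed to match the kernel's values). */
enum { CT_IPCT_NEW = 0, CT_IPCT_RELATED = 1, CT_IPCT_DESTROY = 2 };  /* event bit numbers */
enum { CT_MSG_NEW = 0, CT_MSG_DELETE = 2 };                          /* IPCTNL_MSG_CT_* */
enum { CT_GRP_NONE = 0, CT_GRP_NEW = 1, CT_GRP_UPDATE = 2, CT_GRP_DESTROY = 3 };

struct ct_route {
    int type;            /* nfnetlink message subtype */
    int group;           /* multicast group the event is broadcast to */
    unsigned int flags;  /* nlmsg_flags */
};

/* Mirror of the type/group selection in ctnetlink_conntrack_event(). */
static struct ct_route ct_classify(unsigned int events)
{
    struct ct_route r = { CT_MSG_NEW, CT_GRP_NONE, 0 };
    if (events & (1u << CT_IPCT_DESTROY)) {
        r.type = CT_MSG_DELETE;          /* destroy wins over everything else */
        r.group = CT_GRP_DESTROY;
    } else if (events & ((1u << CT_IPCT_NEW) | (1u << CT_IPCT_RELATED))) {
        r.type = CT_MSG_NEW;
        r.flags = NLM_F_CREATE | NLM_F_EXCL;
        r.group = CT_GRP_NEW;
    } else if (events) {
        r.group = CT_GRP_UPDATE;         /* assumed: any other event is an update */
    }
    return r;                            /* CT_GRP_NONE: nothing to send */
}
```

The design point this shows is that a listener subscribed only to the NEW group sees one message per connection rather than every state change, which keeps the event stream small.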

nf_conntrack_event_cb->fcn therefore runs ctnl_notifier.fcn, that is, ctnetlink_conntrack_event. ctnetlink_conntrack_event uses the ctnetlink_dump_xxx family of functions to copy the data in item->ct into the skb, and finally calls nfnetlink_send to send it to the chosen group. A socket that joins the NFNLGRP_CONNTRACK_NEW group of NETLINK_NETFILTER can therefore receive information about new connections in real time. A socket joins a group with setsockopt:

```c
setsockopt(fd, SOL_NETLINK, NETLINK_ADD_MEMBERSHIP, &group, sizeof(group));
```

```c
// net/socket.c
SYSCALL_DEFINE5(setsockopt, int, fd, ...)
{
    ...
    err = sock->ops->setsockopt(sock, level, optname, optval, optlen);
    ...
}

// net/netlink/af_netlink.c
static int netlink_setsockopt(struct socket *sock, ...)
{
    ...
    switch (optname) {
    case NETLINK_ADD_MEMBERSHIP:
    case NETLINK_DROP_MEMBERSHIP: {
        netlink_update_socket_mc(nlk, val,
                                 optname == NETLINK_ADD_MEMBERSHIP);
    }
    }
    ...
}

static void netlink_update_socket_mc(struct netlink_sock *nlk, ...)
{
    int old, new = !!is_new, subscriptions;

    old = test_bit(group - 1, nlk->groups);
    subscriptions = nlk->subscriptions - old + new;
    if (new)
        __set_bit(group - 1, nlk->groups);
    else
        __clear_bit(group - 1, nlk->groups);
    netlink_update_subscriptions(&nlk->sk, subscriptions);
    netlink_update_listeners(&nlk->sk);
}
```
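The bookkeeping in netlink_update_socket_mc is a plain bitmap plus a counter. A user-space toy model (struct demo_nlk, DEMO_NGROUPS, and the demo_* functions are hypothetical) shows the group N to bit N-1 mapping and why the counter is computed as subscriptions - old + new: joining a group twice leaves the count unchanged. The range check mirrors netlink_setsockopt rejecting group 0 or a group beyond ngroups with -EINVAL, as far as I know.

```c
#include <errno.h>
#include <limits.h>
#include <stdbool.h>

/* Toy model of the per-socket group bitmap; not kernel code. */
#define DEMO_NGROUPS 64
#define DEMO_BITS_PER_LONG (sizeof(unsigned long) * CHAR_BIT)

struct demo_nlk {
    unsigned long groups[(DEMO_NGROUPS + DEMO_BITS_PER_LONG - 1) / DEMO_BITS_PER_LONG];
    unsigned int subscriptions;   /* number of bits currently set */
};

static bool demo_test_bit(const struct demo_nlk *n, unsigned int bit)
{
    return (n->groups[bit / DEMO_BITS_PER_LONG] >> (bit % DEMO_BITS_PER_LONG)) & 1UL;
}

/* join != 0 models NETLINK_ADD_MEMBERSHIP, join == 0 NETLINK_DROP_MEMBERSHIP. */
static int demo_update_mc(struct demo_nlk *n, unsigned int group, int join)
{
    if (group == 0 || group > DEMO_NGROUPS)
        return -EINVAL;                   /* groups are numbered from 1 */
    unsigned int bit = group - 1;
    int old = demo_test_bit(n, bit), new_ = !!join;
    n->subscriptions = n->subscriptions - old + new_;
    unsigned long mask = 1UL << (bit % DEMO_BITS_PER_LONG);
    if (new_)
        n->groups[bit / DEMO_BITS_PER_LONG] |= mask;
    else
        n->groups[bit / DEMO_BITS_PER_LONG] &= ~mask;
    return 0;
}
```

Since NFNLGRP_CONNTRACK_NEW is group 1, joining it sets bit 0 of the socket's bitmap.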

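Calling setsockopt invokes netlink_setsockopt, which adds the socket to the specified group so that it receives data from the corresponding kernel module. netfilter conntrack is a mature mechanism for tracking connection state, and it fits the "monitor newly created connections" scenario well, which gives this approach good performance, real-time behavior, and compatibility. You can try it quickly with conntrack-tools:

```shell
yum install conntrack-tools -y
conntrack -E -e NEW
```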
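The conntrack -E -e NEW command essentially subscribes to the group described above. A minimal C sketch of the same subscription follows, assuming the uapi headers linux/netfilter/nfnetlink.h and linux/netfilter/nfnetlink_conntrack.h are installed, the netfilter modules are loaded, and the process has CAP_NET_ADMIN (joining netfilter groups as an unprivileged user typically fails with EPERM). The function names are hypothetical, and decoding the 5-tuple from the CTA_TUPLE_ORIG attributes is left out.

```c
#include <linux/netfilter/nfnetlink.h>
#include <linux/netfilter/nfnetlink_conntrack.h>
#include <linux/netlink.h>
#include <stdio.h>
#include <sys/socket.h>
#include <unistd.h>

#ifndef SOL_NETLINK
#define SOL_NETLINK 270
#endif

/* Open a NETLINK_NETFILTER socket and join NFNLGRP_CONNTRACK_NEW.
 * Returns the fd, or -1 if any step fails (module missing, no privilege, ...). */
static int ct_listen_open(void)
{
    int fd = socket(AF_NETLINK, SOCK_RAW, NETLINK_NETFILTER);
    if (fd < 0)
        return -1;
    struct sockaddr_nl sa = { .nl_family = AF_NETLINK };  /* nl_pid 0: kernel assigns */
    unsigned int group = NFNLGRP_CONNTRACK_NEW;
    if (bind(fd, (struct sockaddr *)&sa, sizeof(sa)) < 0 ||
        setsockopt(fd, SOL_NETLINK, NETLINK_ADD_MEMBERSHIP, &group, sizeof(group)) < 0) {
        close(fd);
        return -1;
    }
    return fd;
}

/* nfnetlink puts the subsystem id in the high byte of nlmsg_type and the
 * subsystem's own message type in the low byte. */
static int ct_is_new_event(const struct nlmsghdr *nlh)
{
    return (nlh->nlmsg_type >> 8) == NFNL_SUBSYS_CTNETLINK &&
           (nlh->nlmsg_type & 0xff) == IPCTNL_MSG_CT_NEW;
}

/* Block forever, printing one line per new-connection message. */
static void ct_listen_loop(int fd)
{
    union { struct nlmsghdr h; char raw[8192]; } buf;
    for (;;) {
        ssize_t n = recv(fd, &buf, sizeof(buf), 0);
        if (n < 0)
            break;
        int len = (int)n;
        for (struct nlmsghdr *nlh = &buf.h; NLMSG_OK(nlh, len); nlh = NLMSG_NEXT(nlh, len))
            if (ct_is_new_event(nlh))
                printf("new conntrack event, %u bytes\n", nlh->nlmsg_len);
    }
}
```

Usage would be int fd = ct_listen_open(); followed by ct_listen_loop(fd) when fd is not -1.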

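In practice, some machines can obtain real-time connection information through netlink and others cannot. The source analysis explains why: netlink itself is enabled by default through core_initcall(netlink_proto_init), but the netfilter-related modules are not loaded by default. The Makefile also shows that the nf_conntrack_ipv4 kernel module contains the nf_conntrack_l3proto_ipv4 code we need:

```make
# net/ipv4/netfilter/Makefile
nf_conntrack_ipv4-objs := nf_conntrack_l3proto_ipv4.o nf_conntrack_proto_icmp.o
obj-$(CONFIG_NF_CONNTRACK_IPV4) += nf_conntrack_ipv4.o
...
```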

To obtain real-time connection information through netlink, these kernel modules must be loaded: nfnetlink, nf_conntrack, nf_defrag_ipv4, nf_conntrack_netlink, and nf_conntrack_ipv4. Loading nf_conntrack_netlink and nf_conntrack_ipv4 with modprobe pulls in the other dependencies automatically.
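A quick way to check whether those modules are present is to look them up in /proc/modules. The sketch below (hypothetical helper names) does a plain text lookup on the first field of each line. Keep in mind that modules built into the kernel never appear in /proc/modules, and on newer kernels nf_conntrack_ipv4 no longer exists as a separate module because, as far as I know, it was merged into nf_conntrack around 4.19, so a missing entry is not proof the feature is unavailable.

```c
#include <stdbool.h>
#include <stdio.h>
#include <string.h>

/* Modules listed in the text above. */
static const char *const ct_modules[] = {
    "nfnetlink", "nf_conntrack", "nf_defrag_ipv4",
    "nf_conntrack_netlink", "nf_conntrack_ipv4",
};

/* True if some line of the /proc/modules-style text starts with "name ". */
static bool module_listed(const char *text, const char *name)
{
    size_t n = strlen(name);
    for (const char *line = text; line && *line; ) {
        if (strncmp(line, name, n) == 0 && line[n] == ' ')
            return true;                  /* exact first-field match */
        line = strchr(line, '\n');
        if (line)
            line++;                       /* move to the start of the next line */
    }
    return false;
}

/* Read /proc/modules into buf as a NUL-terminated string.
 * Returns the number of bytes read, or -1 on error. */
static long read_proc_modules(char *buf, size_t cap)
{
    if (cap == 0)
        return -1;
    FILE *f = fopen("/proc/modules", "r");
    if (!f)
        return -1;
    size_t n = fread(buf, 1, cap - 1, f);
    fclose(f);
    buf[n] = '\0';
    return (long)n;
}
```

When loading modules by hand, note that modprobe treats extra arguments after the first module name as module parameters, so loading both modules in a single command needs modprobe -a nf_conntrack_netlink nf_conntrack_ipv4.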

