2024-05-20 15:03:03 · Source: hackernoon.com

Authors:

(1) Xiaofei Sun, Zhejiang University;

(2) Xiaoya Li, Shannon.AI and Bytedance;

(3) Shengyu Zhang, Zhejiang University;

(4) Shuhe Wang, Peking University;

(5) Fei Wu, Zhejiang University;

(6) Jiwei Li, Zhejiang University;

(7) Tianwei Zhang, Nanyang Technological University;

(8) Guoyin Wang, Shannon.AI and Bytedance.

LLM Negotiation for Sentiment Analysis

Abstract

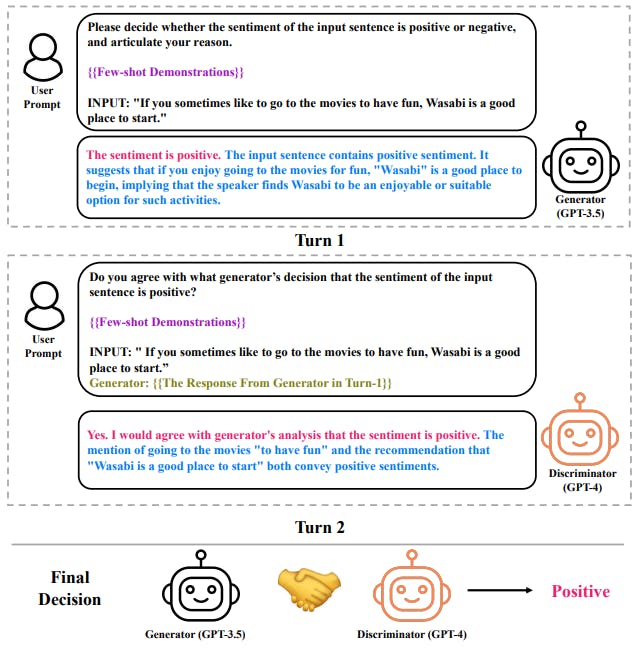

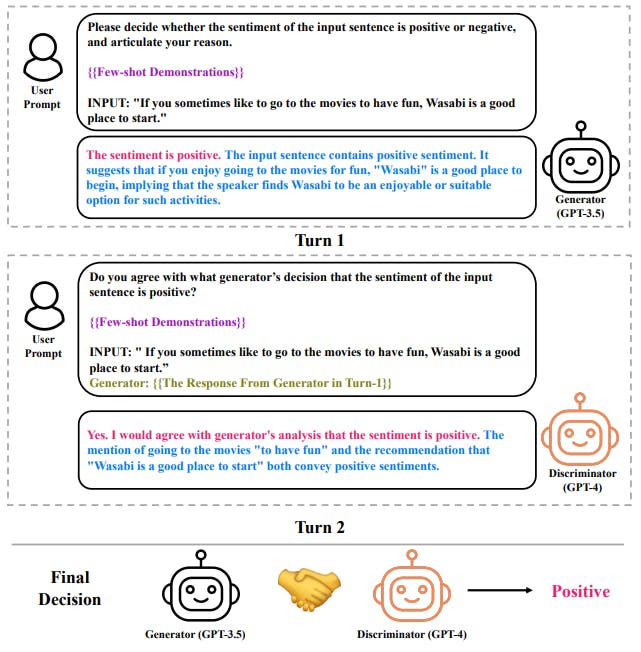

A standard paradigm for sentiment analysis is to rely on a single LLM that makes its decision in a single round under the framework of in-context learning. This framework suffers from a key disadvantage: the single-turn output generated by a single LLM might not deliver the correct decision, just as humans sometimes need multiple attempts to get things right. This is especially true for sentiment analysis, where deep reasoning is required to address complex linguistic phenomena in the input (e.g., clause composition, irony, etc.).

To address this issue, this paper introduces a multi-LLM negotiation framework for sentiment analysis. The framework consists of a reasoning-infused generator, which provides a decision along with its rationale, and an explanation-deriving discriminator, which evaluates the credibility of the generator's output. The generator and the discriminator iterate until a consensus is reached. The proposed framework naturally addresses the aforementioned challenge, since it draws on the complementary abilities of two LLMs and has them use rationales to persuade each other to correct their decisions.

Experiments on a wide range of sentiment analysis benchmarks (SST-2, Movie Review, Twitter, Yelp, Amazon, IMDB) demonstrate the effectiveness of the proposed approach: it consistently outperforms the ICL baseline across all benchmarks, and even outperforms supervised baselines on the Twitter and Movie Review datasets.

1 Introduction

Sentiment analysis (Pang and Lee, 2008; Go et al., 2009; Maas et al., 2011a; Zhang and Liu, 2012; Baccianella et al., 2010; Medhat et al., 2014; Bakshi et al., 2016; Zhang et al., 2018) aims to extract the opinion polarity expressed by a chunk of text. Recent advances in large language models (LLMs) (Brown et al., 2020; Ouyang et al., 2022; Touvron et al., 2023a,b; Anil et al., 2023; Zeng et al., 2022b; OpenAI, 2023; Bai et al., 2023) open a new door for resolving the task (Lu et al., 2021; Kojima et al., 2022; Wang et al., 2022b; Wei et al., 2022b; Wan et al., 2023; Wang et al., 2023; Sun et al., 2023b,a; Lightman et al., 2023; Li et al., 2023; Schick et al., 2023): under the paradigm of in-context learning (ICL), LLMs are able to achieve performance comparable to supervised learning strategies (Lin et al., 2021; Sun et al., 2021; Phan and Ogunbona, 2020; Dai et al., 2021) with only a small number of training examples.

Existing approaches that harness LLMs for sentiment analysis usually rely on a single LLM and make a decision in a single round under ICL. This strategy has the following disadvantage: the single-turn output generated by a single LLM might not deliver the correct response. Just as humans sometimes need multiple attempts to get things right, it might take multiple rounds before an LLM makes the right decision. This is especially true for sentiment analysis, where LLMs usually need to articulate their reasoning process to address complex linguistic phenomena in the input sentence (e.g., clause composition, irony, etc.).

To address this issue, in this paper we propose a multi-LLM negotiation strategy for sentiment analysis. The core of the proposed strategy is a generator-discriminator framework, where one LLM acts as the generator (G) to produce sentiment decisions, while the other acts as the discriminator (D), tasked with evaluating the credibility of the output generated by the first LLM. The proposed method innovates in three aspects: (1) Reasoning-infused generator (G): an LLM that adheres to a structured reasoning chain, enhancing the ICL of the generator while offering the discriminator the evidence and insights needed to evaluate the validity of its decision; (2) Explanation-deriving discriminator (D): another LLM designed to offer post-evaluation rationales for its judgments; (3) Negotiation: the two LLMs take the roles of the generator and the discriminator, and negotiate until a consensus is reached.

This strategy harnesses the collective abilities of the two LLMs and provides a channel for the models to correct imperfect responses, and thus naturally resolves the issue that a single LLM may not yield the correct decision on its first try.
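The negotiation described above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function `negotiate`, the `Turn` record, the `max_turns` cap, and the two toy stand-ins for LLM calls are all hypothetical names introduced here for illustration. The sketch assumes the generator returns a label with a rationale, the discriminator returns a verdict with an explanation, and the discriminator's explanation is fed back to the generator on the next turn. What happens when no consensus is reached is not specified in this section, so the sketch simply returns `None` in that case.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Turn:
    label: str        # sentiment decision proposed by the generator
    rationale: str    # generator's reasoning for that decision
    agreed: bool      # whether the discriminator accepted the decision
    explanation: str  # discriminator's explanation for its verdict


# Hypothetical interfaces (assumptions, not from the paper):
# generator(text, feedback) -> (label, rationale)
# discriminator(text, label, rationale) -> (agreed, explanation)
Generator = Callable[[str, Optional[str]], Tuple[str, str]]
Discriminator = Callable[[str, str, str], Tuple[bool, str]]


def negotiate(
    text: str,
    generator: Generator,
    discriminator: Discriminator,
    max_turns: int = 3,
) -> Tuple[Optional[str], List[Turn]]:
    """Iterate generator and discriminator until they agree or turns run out."""
    history: List[Turn] = []
    feedback: Optional[str] = None
    for _ in range(max_turns):
        label, rationale = generator(text, feedback)
        agreed, explanation = discriminator(text, label, rationale)
        history.append(Turn(label, rationale, agreed, explanation))
        if agreed:
            return label, history  # consensus reached
        feedback = explanation  # pass the critique back to the generator
    return None, history  # no consensus within max_turns


# Toy stand-ins for the two LLMs, used only to exercise the loop.
def toy_generator(text: str, feedback: Optional[str]) -> Tuple[str, str]:
    first_guess = "positive" if "great" in text.lower() else "negative"
    if feedback is None:
        return first_guess, "surface keyword cue"
    flipped = "negative" if first_guess == "positive" else "positive"
    return flipped, f"revised after feedback: {feedback}"


def toy_discriminator(text: str, label: str, rationale: str) -> Tuple[bool, str]:
    if "delay" in text.lower() and label == "positive":
        return False, "'great' reads as ironic next to 'delay'"
    return True, "decision is consistent with the text"


label, history = negotiate(
    "Oh great, another two-hour delay.", toy_generator, toy_discriminator
)
print(label, len(history))  # negative 2
```

In a real setting, the two toy functions would be replaced by prompts to two different LLMs; the loop structure stays the same.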

The contributions of this work can be summarized as follows: (1) we provide a novel perspective on how sentiment analysis can benefit from multi-LLM negotiation; (2) we introduce a Generator-Discriminator Role-switching Decision-Making framework that enables multi-LLM collaboration through iteratively generating and validating sentiment categorizations; (3) our empirical findings offer evidence for the efficacy of the proposed approach: experiments on a wide range of sentiment analysis benchmarks (SST-2, Movie Review, Twitter, Yelp, Amazon, IMDB) demonstrate that the proposed method consistently outperforms the ICL baseline across all benchmarks, and even outperforms supervised baselines on the Twitter and Movie Review datasets.
